Axios AI+ Government

May 15, 2026

Morning! Today we've got a look at the conflicts with China that matter most in the AI race, and yes, we will definitely accept your praise for thinking of this story while President Trump is in China.

👀 ICYMI: Disagreement among administration officials and a time crunch with President Trump's China summit are holding up efforts to launch a federal response to the next frontier of AI.

Today's newsletter is 1,289 words, a 5-minute read.

1 big thing: 3 big conflicts in race against China

Three major conflicts are shaping America's AI race against China in real time, with global dynamics shifting the terms week by week:

- The race with China on advancing AI models

- Conflicting federal vs. state laws in the U.S.

- European AI policy

Why it matters: All three factors are fraught with politics and delicate dynamics, and all will help determine whether the U.S. continues to lead the world in advanced AI.

What they're saying: AI leaders recognize that the technology is so big that it requires global cooperation, even with the fears that China is stealing U.S. technology or is not aligned with U.S. values.

- That's an especially relevant topic during President Trump's China summit this week.

- AI "transcends a lot of the prevailing or traditional trade type issues," Chris Lehane, OpenAI's vice president of global affairs, told reporters in Washington this week.

- He proposed a global governance system for AI which could include China: "There is an opportunity to really start to build something up globally, and have countries around the world, including China, potentially participate."

The race with China: But U.S. officials have to navigate cutthroat competition while recognizing that it is important to communicate about safety with the other world leaders in AI.

- They also have to decide what advanced technology the Chinese market will have access to, a subject that divides Washington.

- U.S. officials are walking that tightrope this week: Treasury Secretary Scott Bessent told CNBC Thursday that the U.S. and China would establish some sort of protocol for AI safety, but that doing so was only possible because the U.S. currently holds the lead in the technology.

- The U.S. lead may be slim, though: A Commerce Department analysis found DeepSeek V4 Pro, a leading Chinese model, is only about eight months behind the U.S. frontier.

In a blog post Thursday, Anthropic argued for stricter export controls and tighter safeguards against Chinese Communist Party intellectual property theft to strengthen U.S. leadership in AI.

- In addition, Democrats and Republicans on the Hill are urging Trump officials to be careful while in China.

- Sen. Chris Coons (D-Del.) argued against allowing China access to Nvidia's most advanced chips.

- Sen. Jim Banks (R-Ind.) wrote in a letter to administration officials that AI policy is a "particularly difficult domain" due to the "dual mandate of winning the AI race against [China] while navigating critical security challenges along the way."

2. Why state laws and European policies matter

AI companies are also navigating a patchwork of state laws and the landscape of European rules in the race against China.

Driving the news: The leading AI companies (and less-resourced AI startups), along with the Trump administration, have pushed for one federal AI standard, arguing that conflicting state-level laws will impede AI companies' advancement.

- But as state-level AI frontier safety laws have started to mirror one another, the leading labs are learning to love what OpenAI's Lehane has called "reverse federalism," or letting states lead on policy by passing similar laws.

- Both OpenAI and Anthropic came out in support this week of SB 315, an Illinois bill similar to laws in California and New York that would require safety reports from AI labs.

- "If you get a bunch of the big states to effectively agree to this, you would de facto create a national standard," Lehane said.

Between the lines: The leading AI labs are happy with a "de facto" national standard because it's something they can live with, knowing that a formal national standard is politically much tougher to achieve.

- At the same time, battling super PACs aligned with Anthropic and OpenAI are dropping millions on candidates to shape these very battles.

Friction point: While leading AI labs and monied super PACs throw money at shaping the policy landscape, the politics are getting worse: A new University of Pennsylvania poll finds that "only 17% of Americans believe AI will have a positive impact on the United States over the next decade."

- "Progress" in AI, as defined by the labs, is colliding with political realities and having to grapple with what people actually want from AI.

Then there's Europe: The EU's AI Act, along with European policies such as the Digital Markets Act and Digital Services Act, is tripping up American tech and AI companies with rules and fines as they try to dominate overseas markets.

- But Europe is also eager both to be an attractive place for AI startups to flourish and to have access to the most advanced models, including Anthropic's Mythos.

- So it has had to soften a bit, to the advantage of the U.S.: The European Parliament this month agreed to water down and delay its AI rules.

- European red tape will always be an impediment for U.S. tech companies, but the pace of AI advancements could give them some temporary reprieve.

The bottom line: What's good for AI advancement is only sometimes good for geopolitics or voter sentiment.

3. Scoop: Lawmakers press White House to act on cyberthreats

Congress is urging the White House to respond to a growing AI cybersecurity threat: advanced models that can uncover software vulnerabilities faster than companies and governments can patch them.

Why it matters: A bipartisan letter, shared first with Axios, marks an escalation in pressure on the Trump administration to confront the risks posed by frontier AI cyber models like Anthropic's Mythos.

Driving the news: A bipartisan group of 32 House lawmakers wrote to National Cyber Director Sean Cairncross urging immediate action to confront the high volume of cyber vulnerability disclosures cropping up from advanced AI systems.

- In the letter led by Rep. Bob Latta (R-Ohio), the lawmakers call for expanded defensive access to tools like Mythos and OpenAI's GPT-5.5-Cyber, and ask whether the federal government can help software companies validate and patch vulnerabilities discovered by the systems.

What they're saying: "AI can empower defenders to discover a multitude of serious vulnerabilities, but the corresponding disclosure, validation, patching, and deployment efforts may struggle to keep pace," the lawmakers wrote.

- "The lesson from Mythos is not limited to cybersecurity or to just one company," they added.

- "Regardless of how quickly one expects AI capabilities to advance, when important capabilities appear, federal agencies must be able to recognize them and respond quickly."

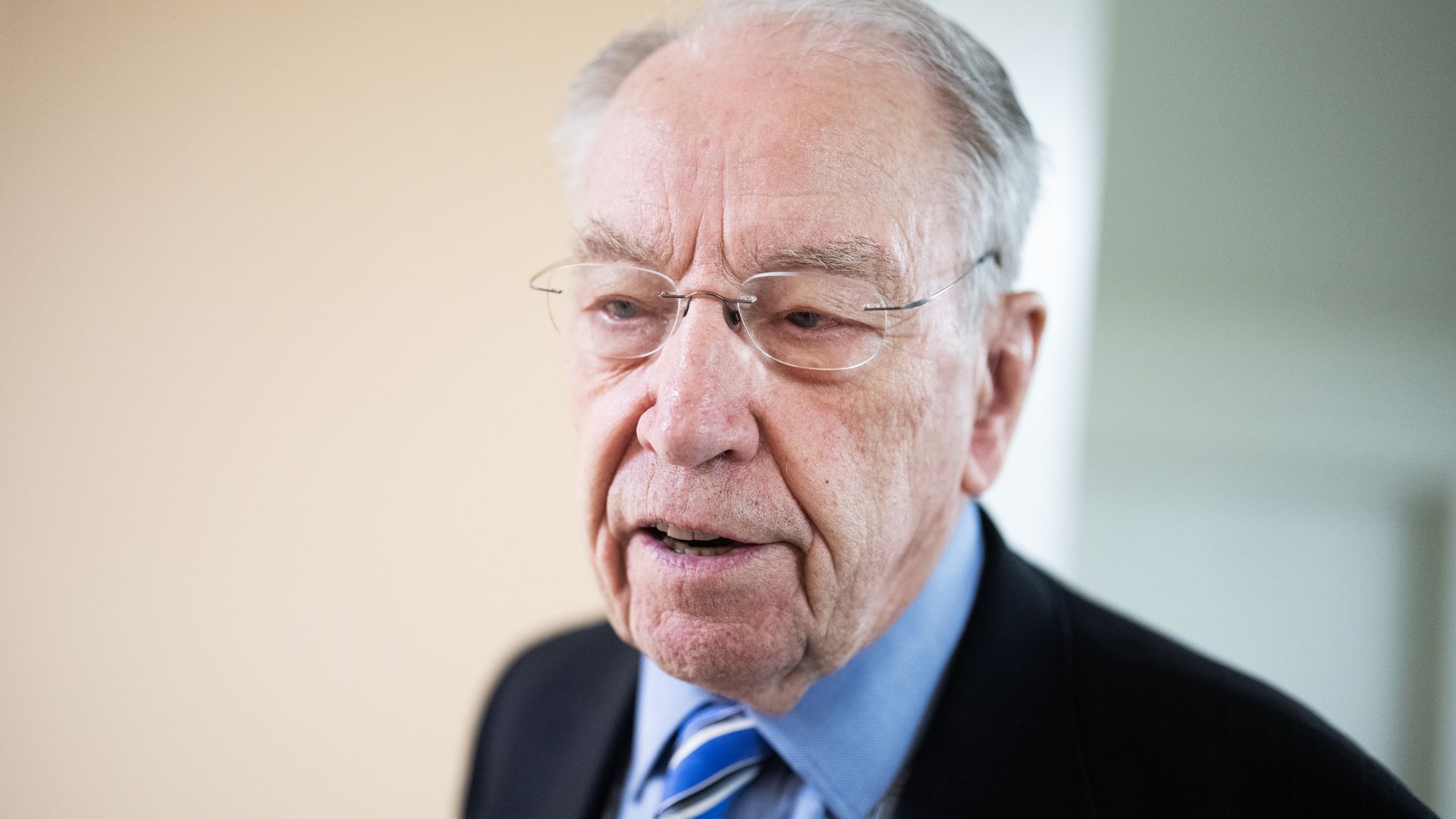

4. Scoop: Tech CEOs summoned to Capitol for June hearing

The CEOs of Meta, Alphabet, TikTok and Snap have been invited back to Capitol Hill for a broad oversight hearing in June, Axios has learned.

- Senate Judiciary Committee Chairman Chuck Grassley is planning a June 23 hearing titled "Examining Tech Industry Practices and the Implications for Users and Families: Is This Social Media's Big Tobacco Moment?" per committee spokesperson Hannah Akey.

- The hearing will look at Big Tech and AI safety oversight, Akey said, along with other issues like whistleblower retaliation.

Why it matters: The hearing comes as social media and AI companies are facing mounting lawsuits, including some first-time losses, and as Capitol Hill gets closer to passing kids' online safety legislation.

- Social media CEOs also haven't testified before Congress since 2024, when they appeared before the Judiciary Committee to discuss many of the same topics.

Details: The committee has not received any formal RSVPs from the CEOs yet, Akey said, but discussions are ongoing.

- People deserve to hear directly from CEOs, Akey said, following a Judiciary subcommittee hearing this week featuring parents pushing for stricter online legislation.

Thanks to David Nather for editing and Matt Piper for copy editing.
