A digital breadcrumb trail for deepfakes

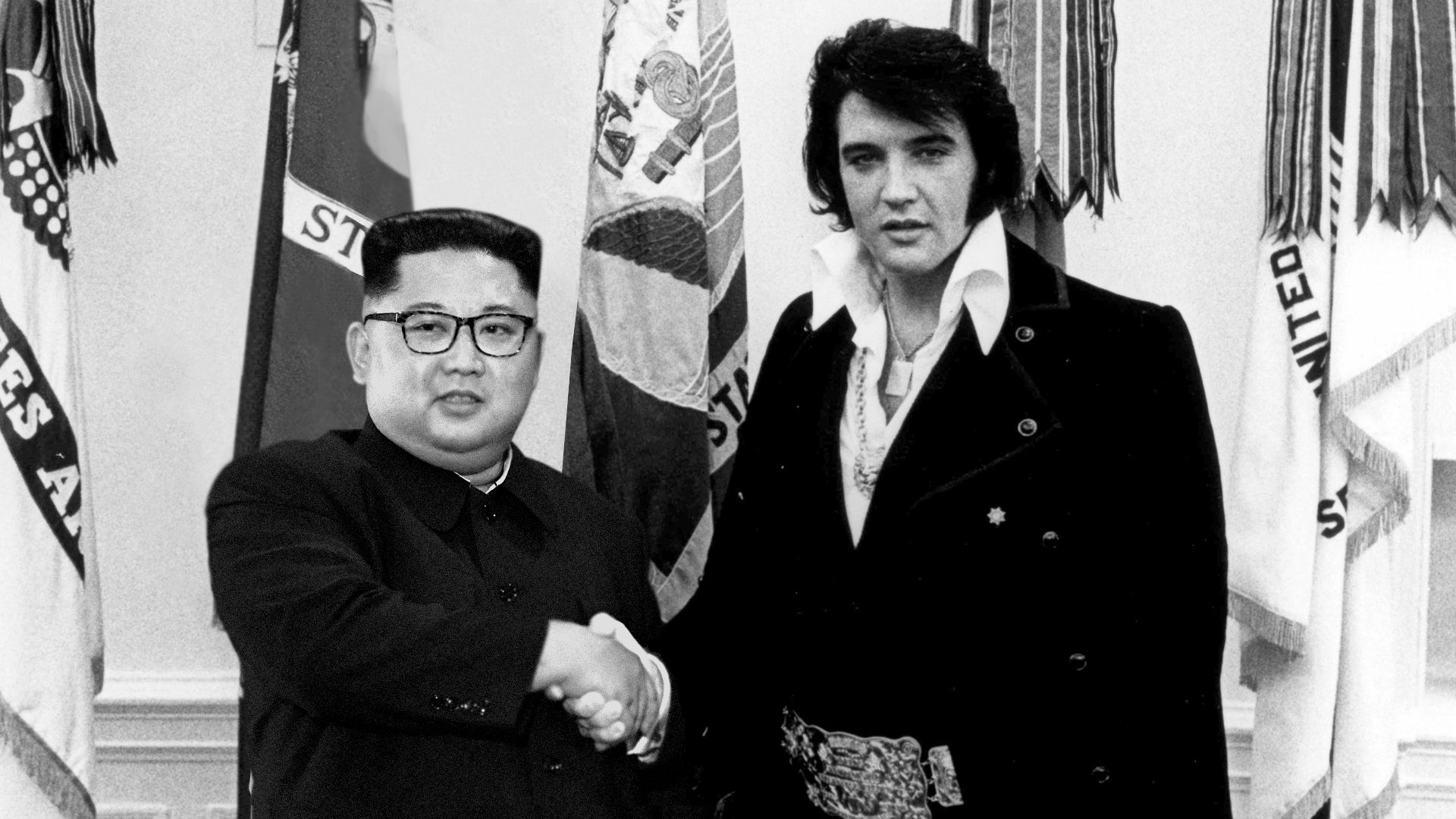

Altered image: Lazaro Gamio/Axios

There is a pitched struggle underway between the makers of fake AI-generated videos and images and forensics experts trying desperately to uncover them. And the detectives are losing.

Why it matters: Their effort is the leading edge in a massive scramble to stave off a potential landscape in which it's impossible to know what's true and what isn't.

Experts are developing methods to verify photos and videos at the precise moment they're taken, leaving no room for doubt about their authenticity. This portends a cynical future in which media must leave a detailed digital breadcrumb trail in order to be believed.

- Some worry that if authentication becomes the default, people without access to verification technology — or who can't give up sensitive information about their location — will lose out.

- One possible outcome: a bifurcated world in which some photos and videos, published by those who can afford the tools and visibility, are accompanied by a green checkmark — but other media languish in obscurity and doubt.

"My concern is that if they actually achieve their end-state goal that they describe, that might work against people who are already marginalized, and might perpetuate data surveillance," says Sam Gregory, a program manager at the human-rights nonprofit WITNESS.

Where it stands: The consensus today is that detecting deepfakes after they've been created is a stopgap — not a permanent solution.

- With billions of photos now uploaded to social media every day, and deepfakes becoming increasingly easy to make, catching forgeries requires automated detection tools, which are unlikely ever to catch even a majority of fakes.

- "I don't believe forensics can work in the long run," says Pawel Korus, a professor of engineering at NYU. "It was never reliable enough to begin with, and it's starting to break as cameras are doing more and more interesting things."

What's happening: The main alternative is to verify a photo or video at the source, using unique information about the specific camera that's taking it.

- The ultimate vision is a universal indicator of veracity to accompany photos and videos on Facebook, YouTube, and other social media.

- But in this future, the all-important imprimatur of truth may not be in everyone's reach.

- "The people who will be de facto excluded in a system of authentication will be people who are in the Global South, use a jailbroken phone, probably are women, probably are in rural areas," Gregory tells Axios.

Several startups are working on this nascent technology.

- TruePic, a venture-backed startup, wants to work with hardware manufacturers (Qualcomm, for now) to log photos and videos the instant they're captured.

- Amber, a small San Francisco startup, sends an encrypted record of photos and videos to a blockchain, so viewers can check if clips were later altered.

- Serelay, based in the U.K., saves about 100 phone sensor readings every time you snap a photo — GPS, pressure sensor, gyroscope, etc. — to check its veracity.
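To make the capture-time idea concrete, here is a minimal Python sketch of the general technique: fingerprint the media the moment it is taken, bundle that fingerprint with a few sensor readings, and seal the bundle so that later changes can be detected. It is not how TruePic, Amber, or Serelay actually work. The function names, the record layout, and the sample sensor fields are all hypothetical. A keyed hash (HMAC) with a shared secret stands in for the device-level signature, where a real system would more likely use public-key signatures and a tamper-evident ledger, neither of which is modeled here.

```python
import hashlib
import hmac
import json


def fingerprint(media_bytes: bytes) -> str:
    """Return a SHA-256 digest of the raw media bytes."""
    return hashlib.sha256(media_bytes).hexdigest()


def make_capture_record(media_bytes: bytes, sensor_readings: dict, device_key: bytes) -> dict:
    """Build a sealed record at capture time (hypothetical layout).

    The payload pairs the media fingerprint with sensor readings; the tag
    is an HMAC over the payload, standing in for a device signature.
    """
    payload = {"media_sha256": fingerprint(media_bytes), "sensors": sensor_readings}
    message = json.dumps(payload, sort_keys=True).encode("utf-8")
    tag = hmac.new(device_key, message, hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


def verify(media_bytes: bytes, record: dict, device_key: bytes) -> bool:
    """Check that the record is intact and that the media still matches it."""
    message = json.dumps(record["payload"], sort_keys=True).encode("utf-8")
    expected_tag = hmac.new(device_key, message, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected_tag, record["tag"]):
        return False  # the record itself was modified after capture
    return hmac.compare_digest(record["payload"]["media_sha256"], fingerprint(media_bytes))


if __name__ == "__main__":
    key = b"demo-device-key"  # assumption: a secret held by the capturing device
    photo = b"raw bytes of a photo"
    record = make_capture_record(photo, {"gps": [37.77, -122.42], "pressure_hpa": 1013.2}, key)
    print(verify(photo, record, key))
    print(verify(photo + b" edited", record, key))
```

In this sketch, changing even one byte of the photo, or any logged sensor value, makes the check fail. The same property is why a stored record lets viewers test whether a clip was altered after capture, and why anyone whose device cannot produce such a record is left without the checkmark.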

What's next: All three companies told Axios that a widespread built-in verification system is still years away. For now, they are working with industries that need to be able to trust incoming videos and photos — TruePic with insurers, Amber with body camera makers, and Serelay with media companies.