Aug 29, 2020 - Technology

Amazon's new Halo health wearable device will be able to track your emotional tone

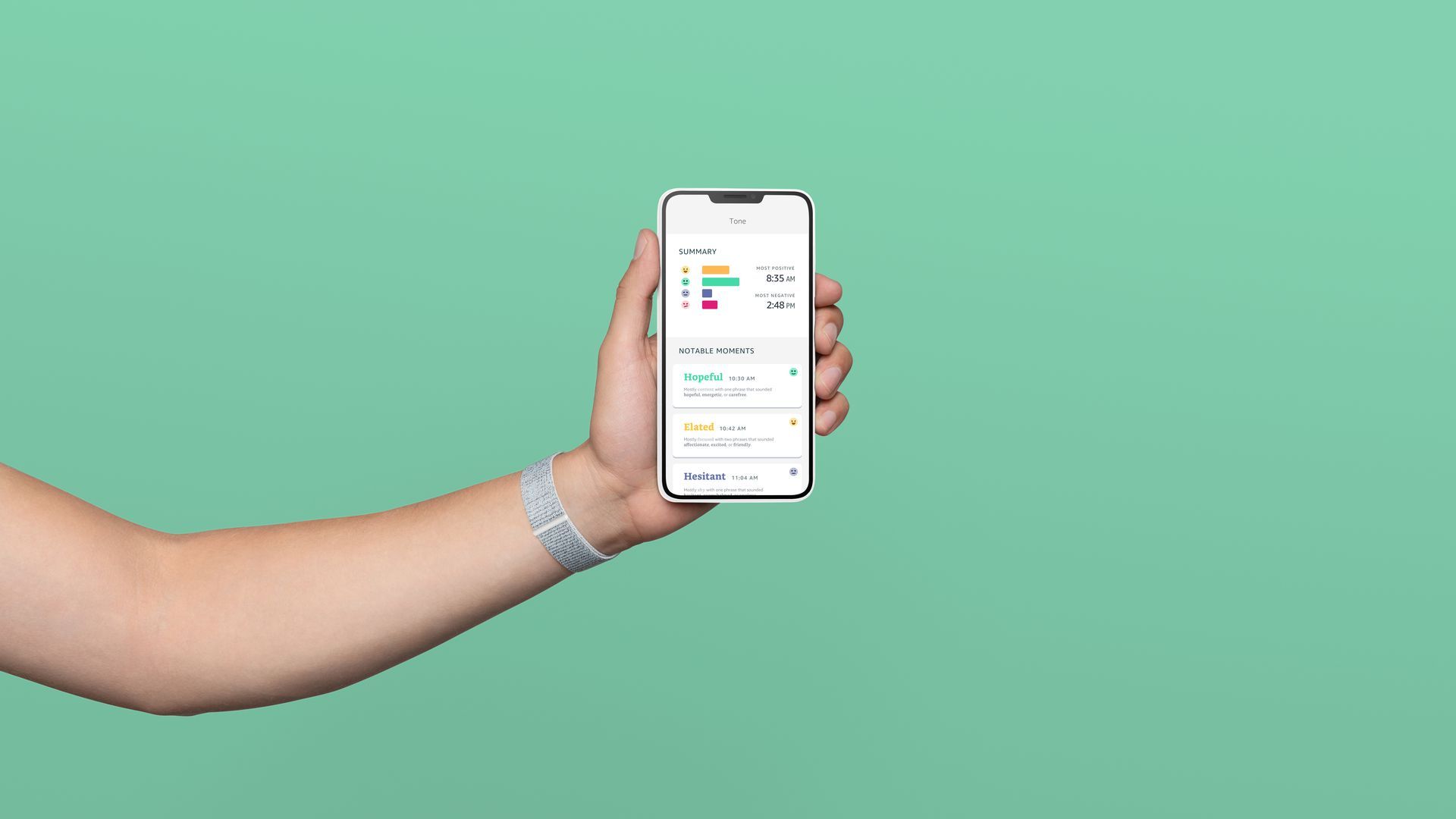

Amazon's Halo wearable, with Tone feature enabled on a smartphone. Photo courtesy of Amazon.