OpenAI makes default ChatGPT more personal

OpenAI is making the default ChatGPT more accurate and more personal — changes that could increase people's reliance on it, while also increasing its access to their lives.

Why it matters: Even subtle changes to a chatbot's tone, accuracy or memory can trigger backlash.

Driving the news: OpenAI updated ChatGPT's default model to respond with more accuracy, more personalization and fewer gratuitous emoji, the company said Tuesday.

- GPT-5.5 Instant is rolling out to all ChatGPT users and to the API.

- Enhanced personalization is initially available to Plus and Pro users on the web.

- Free, Go, Business and Enterprise will come later.

- Paid users can keep GPT-5.3 Instant for three months.

By the numbers: OpenAI says GPT-5.5 Instant produced 52.5% fewer hallucinated claims than GPT-5.3 Instant on high-stakes prompts in areas like medicine, law and finance.

- It also reduced inaccurate claims by 37.3% in especially challenging conversations users had flagged for factual errors.

Zoom in: The new model "matches the scale of the task," the company said in a blog post Tuesday.

- For casual advice prompts, the new version avoids unnecessary follow-up questions and formatting that made responses feel cluttered.

Between the lines: GPT-5.5 Instant will also draw on more of a user's context when responding to prompts.

- That includes information from past chats, files users have uploaded and Gmail, if connected.

Reality check: OpenAI says this means users won't have to repeat themselves as often, but not everyone wants a chatbot with persistent memory.

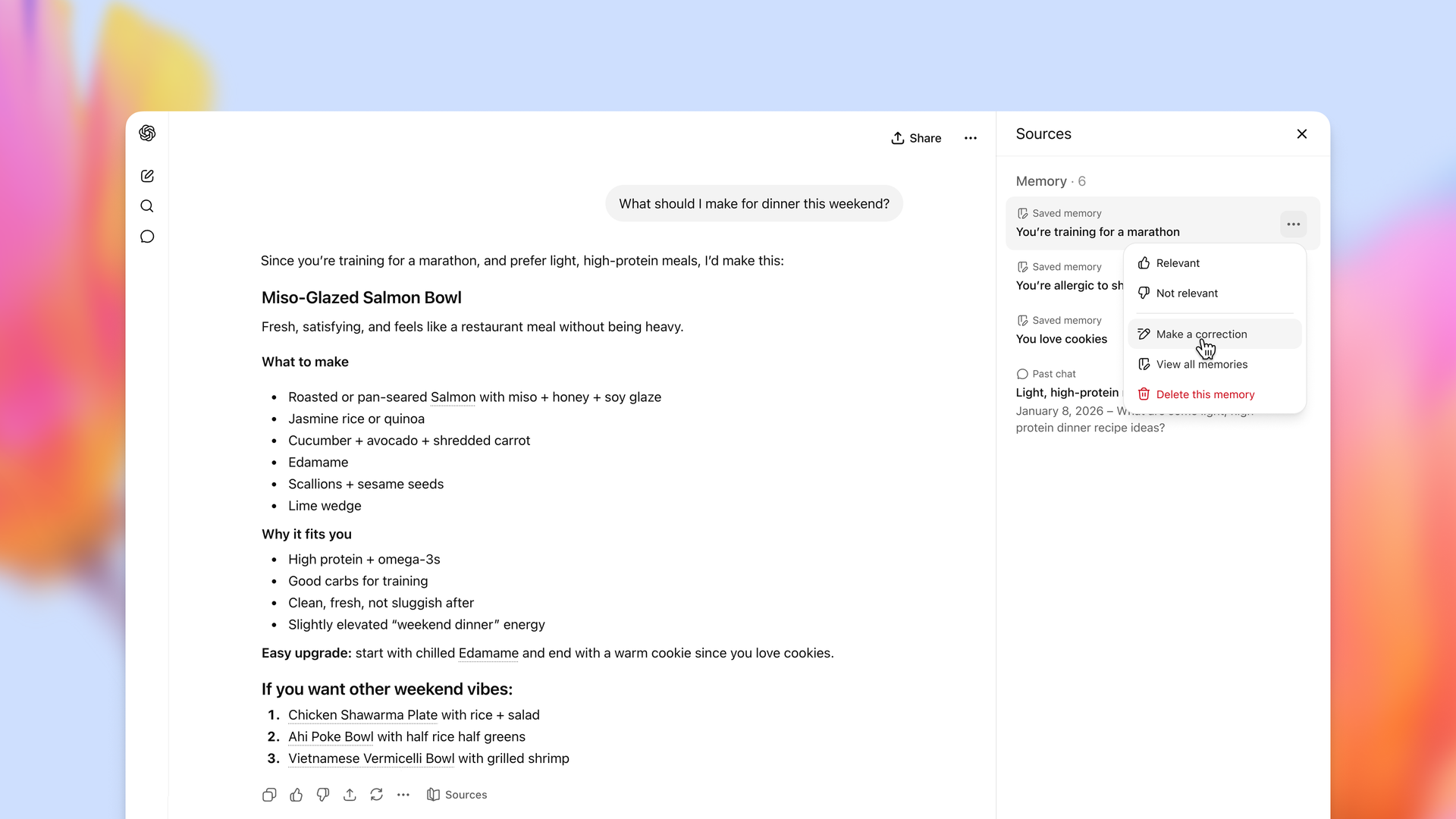

- The company is also adding "memory sources," a control that shows users some of the context ChatGPT used to personalize an answer, such as saved memories or past chats.

- OpenAI says users can delete or correct outdated memory and use temporary chats that do not use or update memory.

Yes, but: The increased memory serves as a reminder that prompts may be stored by AI services, depending on the service and settings.

- Connecting your chatbot to third-party services means putting more of your personal or work information at risk.

- The tradeoff between efficiency and privacy has been a constant tension for as long as the internet has existed.

Zoom out: Lower hallucination rates can also create new problems.

- Users may trust answers more even when the model is still capable of getting things wrong.

What we're watching: Whether OpenAI's new memory-source controls are enough to reassure users who want more personalized answers without feeling watched.