YouTube expands deepfake detection tool to politicians and journalists

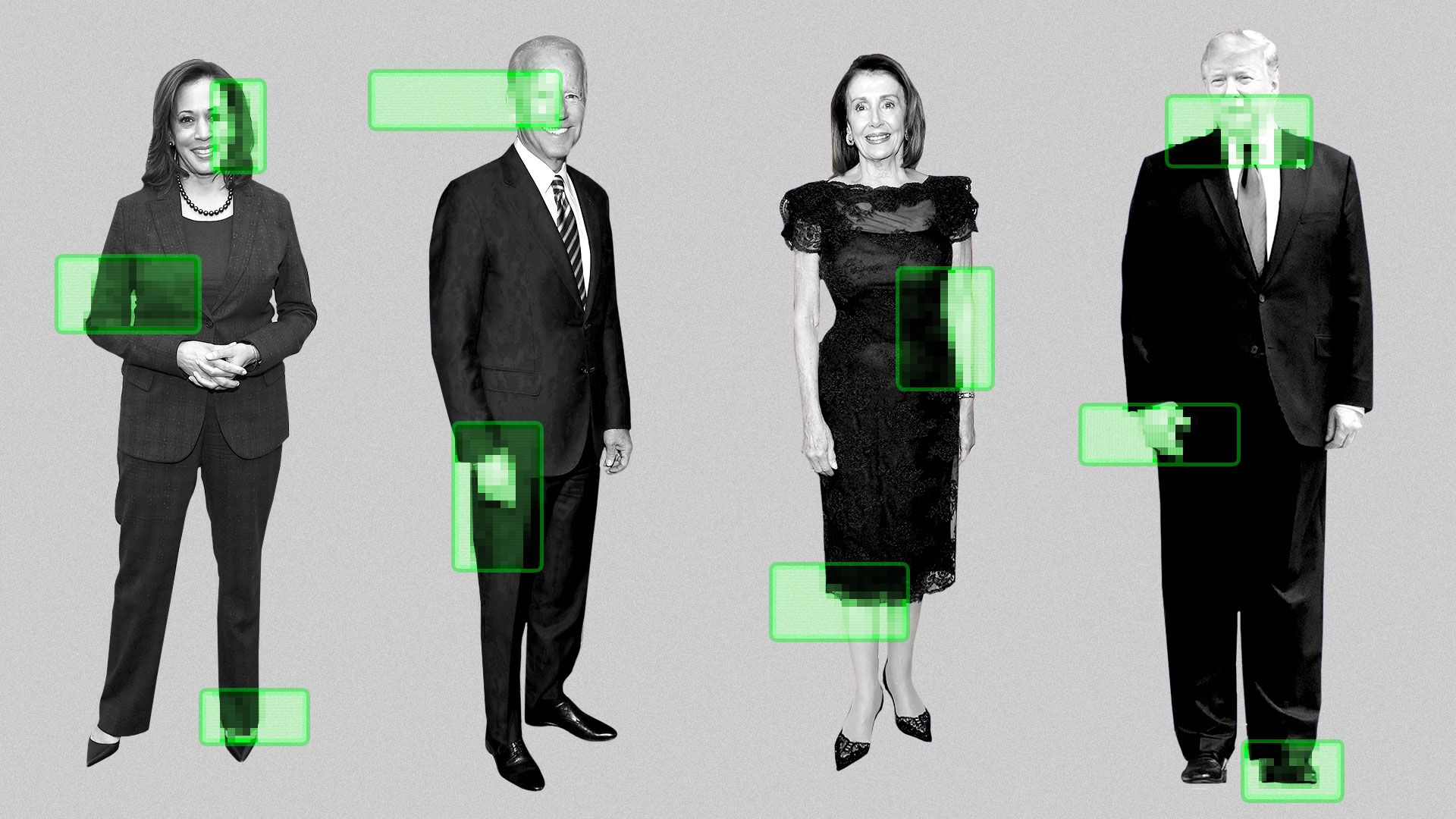

Illustration: Aïda Amer/Axios

YouTube is expanding a tool that detects impersonations to a select group of government officials, political candidates and journalists.

Why it matters: New AI systems are exacerbating the problem of deepfakes, making it easier to create convincing videos of real people, including President Trump.

What they're saying: "This expansion is really about the integrity of the public conversation," Leslie Miller, vice president, government affairs and public policy at YouTube, said on a call with journalists.

- "We know that the risks of AI impersonation are particularly high for those in the civic space."

How it works: YouTube's likeness detection technology scans videos uploaded to the platform for content that appears to use someone's likeness, namely their face.

- If a match is detected, individuals can review the flagged video and request to have it removed through YouTube's privacy complaint process.

- Requests don't guarantee the video will be taken down. YouTube allows parody and satire.

- YouTube declined to share who exactly has access in this pilot, including when asked specifically about President Trump. Participants must verify their identity by submitting a government ID and video selfie.

The big picture: Tech companies are creating more safeguards against AI and impersonation.

- YouTube CEO Neal Mohan said one of his top 2026 priorities is AI transparency and protections, including labeling AI content and removing harmful synthetic media.

- YouTube started developing the likeness detection tool in 2024 with Creative Artists Agency and tested it with top creators, including MrBeast and Marques Brownlee. It expanded access to all creators last year.

Between the lines: YouTube said creators using the tool over the past year have flagged relatively few videos for removal.

- "Most of it turns out to be fairly benign or additive to their overall business," said Amjad Hanif, vice president of creator products at YouTube.

What's next: YouTube plans to expand access to all government officials, political candidates and journalists.

- The likeness detection tool is focused on facial likeness, for now. But Hanif said the company is also exploring voice impersonation.

- YouTube is also considering allowing people to monetize their likeness in detected content, as is the case for its Content ID system.

💭 Thought bubble from Axios senior tech policy reporter Ashley Gold: Deepfakes and AI impersonation are areas of concern for Congress and the Trump administration. Last year, Trump signed the TAKE IT DOWN Act, which addresses nonconsensual intimate images (including deepfaked ones).

- However, any more sweeping deepfake or election labeling legislation would be a much heavier lift on the Hill and is not likely prior to the midterms.

What to watch: YouTube endorsed a federal bill called the NO FAKES Act, which would require platforms to act quickly on takedown requests for AI-generated likenesses.

- "We think that bill provides a critical blueprint across the U.S. and worldwide to ensure that technology always serves human creativity, not the other way around," Miller said.