Pentagon blacklists Anthropic, labels AI company "supply chain risk"

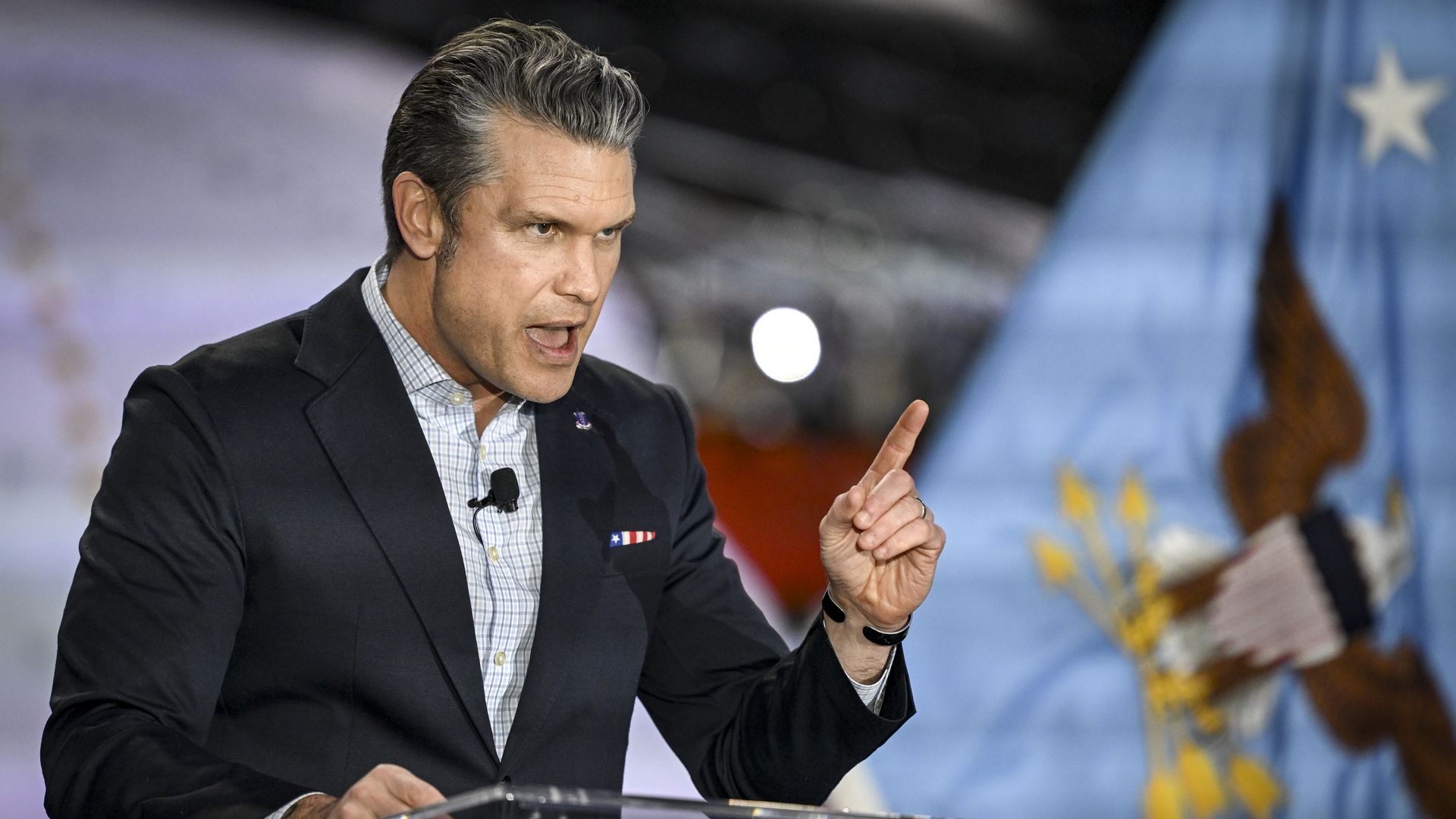

Defense Secretary Pete Hegseth. Photo: Aaron Ontiveroz/The Denver Post

President Trump said Friday the U.S. government would blacklist Anthropic, and the Pentagon declared the company a "supply chain risk," in the most consequential and controversial policy decision to date at the intersection of artificial intelligence and national security.

The big picture: Anthropic rebuffed the Pentagon's demand to lift all safeguards on the military's use of its model, Claude, due to its concerns about the use of AI for mass domestic surveillance and the development of weapons that fire without human involvement.

- For that, the government will now impose a penalty usually reserved for companies from adversarial countries, such as Chinese tech giant Huawei.

- By Friday evening, Anthropic said it would challenge any "supply chain risk" designation in court, and rejected Hegseth's claim that military contractors would be barred from working with the company.

Behind the scenes: Defense undersecretary Emil Michael was on the phone offering Anthropic a deal just as Defense Secretary Pete Hegseth tweeted the company would be designated a supply chain risk, a source familiar told Axios.

- That deal would have required allowing the collection or analysis of data on Americans, from geolocation to web browsing data to personal financial information purchased from data brokers, the source added.

The Pentagon has quickly moved on to OpenAI, another source familiar said, even though the company has similar red lines and a robust safety stack to enforce them.

- It's unclear whether the Pentagon is still insisting, in its talks with OpenAI, on the legal collection of public personal data.

Breaking it down: Following Trump and Hegseth's announcements, the Pentagon will move to sever its contract with Anthropic — valued at up to $200 million — and require companies it works with to certify they don't use Claude in their workflows.

- There will be a six-month wind-down period to allow the Pentagon, its customers and other government agencies to onboard alternatives to Claude.

- "I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic's technology," Trump wrote on Truth Social.

Trump's announcement is particularly extraordinary because Claude is the only AI model currently used in the military's classified systems.

- It was used in the operation to capture Nicolás Maduro and could conceivably be used in a potential military operation in Iran.

- Defense officials praised Claude's capabilities in conversations with Axios, with one admitting it would be a "huge pain in the ass" to disentangle.

- The decision is also complicated for AI software firm Palantir, which uses Claude to power its most sensitive work with the military and will likely now need to strike a deal with one of Anthropic's competitors.

What they're saying: "The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution," Trump wrote.

Between the lines: Trump's declaration came one day after Anthropic CEO Dario Amodei rejected what the Pentagon had called its "best and final offer," stating "we cannot in good conscience accede to their request."

- Emil Michael, the senior Pentagon official who has been steering the negotiations with Anthropic and other AI firms, responded by calling Amodei a "liar" with a "God complex" who was "ok putting our nation's safety at risk."

The Pentagon argues that there are many gray areas around what constitutes mass surveillance or autonomous weaponry, and that it's unworkable to have to litigate individual cases with a private company.

- Its position is that once the military buys a tool, it has its own standards and procedures to determine whether and how to use it. It therefore demanded all AI firms make their models available for "all lawful purposes."

The intrigue: Anthropic says it will challenge any "supply chain risk" designation in court.

- The company has been growing at a staggering rate and establishing dominance in some key enterprise use cases for AI.

- Amodei and colleagues have garnered widespread praise in the past 24 hours for their principled stand. It's not yet clear how expensive that stand will be.

What to watch: Elon Musk's xAI recently signed an agreement to let the military use its model, Grok, in classified systems. Sources say it's unlikely to be a like-for-like replacement for Claude.

- Google's Gemini and OpenAI's ChatGPT are both available in unclassified systems, and the Pentagon is accelerating conversations about bringing them into the classified space.

- Hundreds of employees from Google and OpenAI have signed a petition in the past 24 hours calling on their companies to mirror Anthropic's position.

Editor's note: This story was updated with details on the Pentagon's talks with Anthropic and with Anthropic's threat to take the Pentagon to court.