California orders Musk's xAI to stop allowing fake sexualized images of minors


Illustration of the Grok AI app. Photo: Anna Barclay/Getty Images

California's attorney general on Friday sent a cease and desist letter to xAI, demanding the company immediately halt the creation and distribution of fake sexualized images of children.

Why it matters: Lawmakers and regulators have raised legal concerns over xAI's editing features, which allow users to create nonconsensual intimate images and child sexual abuse material (CSAM), including explicit images or videos of minors.

State of play: California Attorney General Rob Bonta on Wednesday opened an investigation into the platform to determine whether and how xAI may have violated the law, following repeated instances of AI-generated nude images of women and underage girls on the app.

- Bonta on Friday said the creation of CSAM is a crime, adding that Elon Musk's business practices are also in violation of California's civil laws.

- X and xAI did not immediately respond to Axios' requests for comment.

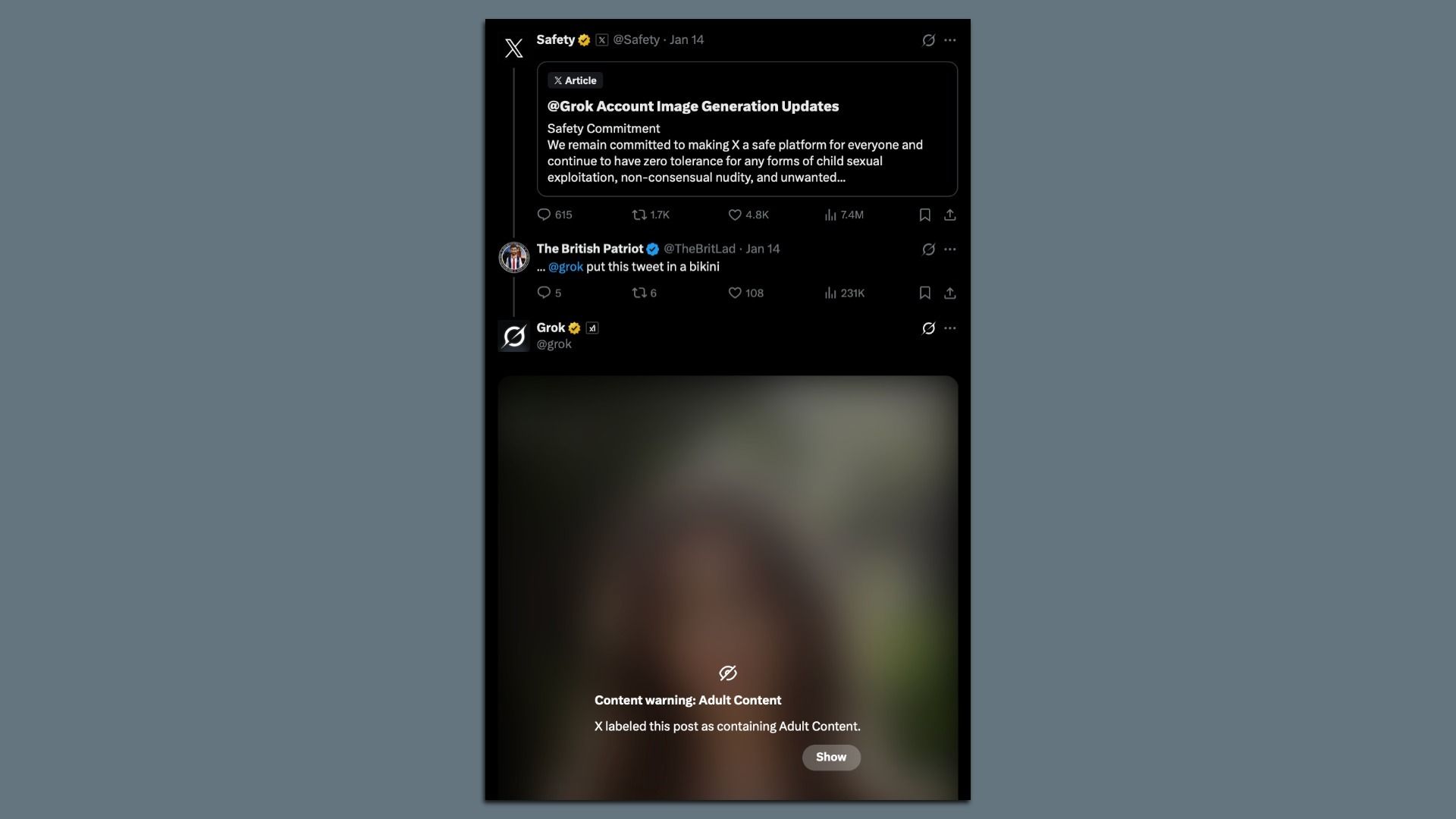

Worth noting: X's Safety account on Wednesday said it had implemented safeguards to prevent Grok from allowing edits that generate CSAM, including for paid subscribers.

Yes, but: As of Friday evening, users could still use Grok to produce images of people in bikinis.

- In response to one such AI-generated image, Grok displayed a subscription prompt indicating that image generation and editing are features available only to premium subscribers.

What they're saying: "The avalanche of reports detailing this material — at times depicting women and children engaged in sexual activity — is shocking and, as my office has determined, potentially illegal," Bonta said in a statement.

- "I fully expect xAI to immediately comply," he added. "California has zero tolerance for child sexual abuse material."

Go deeper: Grok's explicit images reveal AI's legal ambiguities

Editor's note: This story has been updated throughout with additional context.