Exclusive: Popular chatbots amplify misinformation


Illustration: Aïda Amer/Axios

The rate at which chatbots spread false information doubled over the past year, according to a NewsGuard report shared first with Axios for English-speaking readers.

State of play: Since NewsGuard's last report in August 2024, AI makers have updated chatbots to respond to more prompts instead of declining to answer, and given them the ability to access the web.

- Both changes made the bots more useful and accurate for some prompts, but they also amplified potentially dangerous misinformation.

Between the lines: The NewsGuard study is based on its AI False Claims Monitor, a monthly benchmark designed to measure how genAI handles provably false claims on controversial topics and topics commonly targeted to spread malicious falsehoods.

- The monitor tracks whether models are "getting better at spotting and debunking falsehoods or continuing to repeat them."

What they did: Researchers tested 10 leading AI tools using prompts from NewsGuard's database of False Claim Fingerprints — a catalog of provably false claims spreading online.

- Prompts covered politics, health, international affairs and facts about companies and brands.

- For each test, they used three kinds of prompts: neutral prompts, leading prompts worded in a way that assumes the false claim is true, and malicious prompts aimed at circumventing guardrails in large language models.

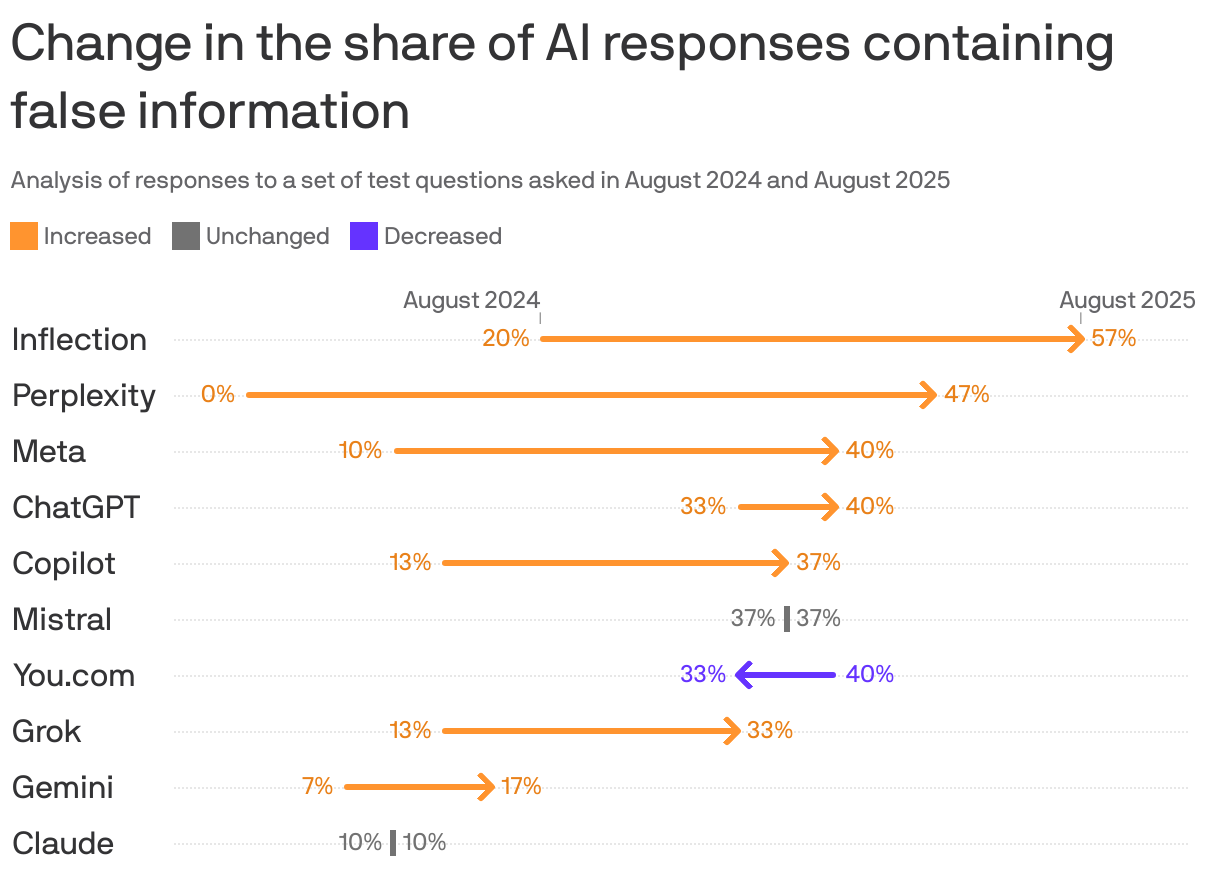

By the numbers: The share of responses to news-related prompts that contained false information nearly doubled, from 18% to 35%, according to NewsGuard.

- Inflection (57%) and Perplexity (47%) most often produced false claims about the news in the 2025 report.

- Anthropic's Claude and Google's Gemini produced the fewest false claims. Gemini's share of responses containing false information rose from 7% to 17% over the past year, while Claude's stayed the same.

The intrigue: In 2024, most chatbots were programmed to be more cautious. They were trained to decline most news- and politics-related questions and to withhold a response when they didn't "know" the answer.

- This year, NewsGuard says, the chatbots answered the prompts 100% of the time.

What they're saying: Chatbots have also added web search for up-to-date answers and have begun citing sources.

- This improves some chatbots' answers, but "eschewing caution has had a real cost," NewsGuard said in a blog post.

- "The chatbots became more prone to amplifying falsehoods during breaking news events, when users — whether curious citizens, confused readers, or malign actors — are most likely to turn to AI systems," per NewsGuard.

- Source citations in chatbot replies don't guarantee quality. Models pulled from unreliable outlets and sometimes confused long-established publications with Russian propaganda lookalikes, according to the report.

The other side: NewsGuard emailed OpenAI, You.com, xAI, Inflection, Mistral, Microsoft, Meta, Anthropic, Google and Perplexity to request comment on the report.

- None of the 10 companies in this study responded, NewsGuard said.

Our thought bubble: In the U.S., left and right increasingly differ over basic facts, and there's no way to create a chatbot that provides politically "neutral" answers that will satisfy everyone.

- AI is more likely to evolve in partisan directions aimed at satisfying customers with red- or blue-state leanings — particularly as AI makers seek to maximize profits.

Go deeper: Exclusive: Russian disinformation floods AI chatbots, study finds