Confusing school AI policies leave families guessing


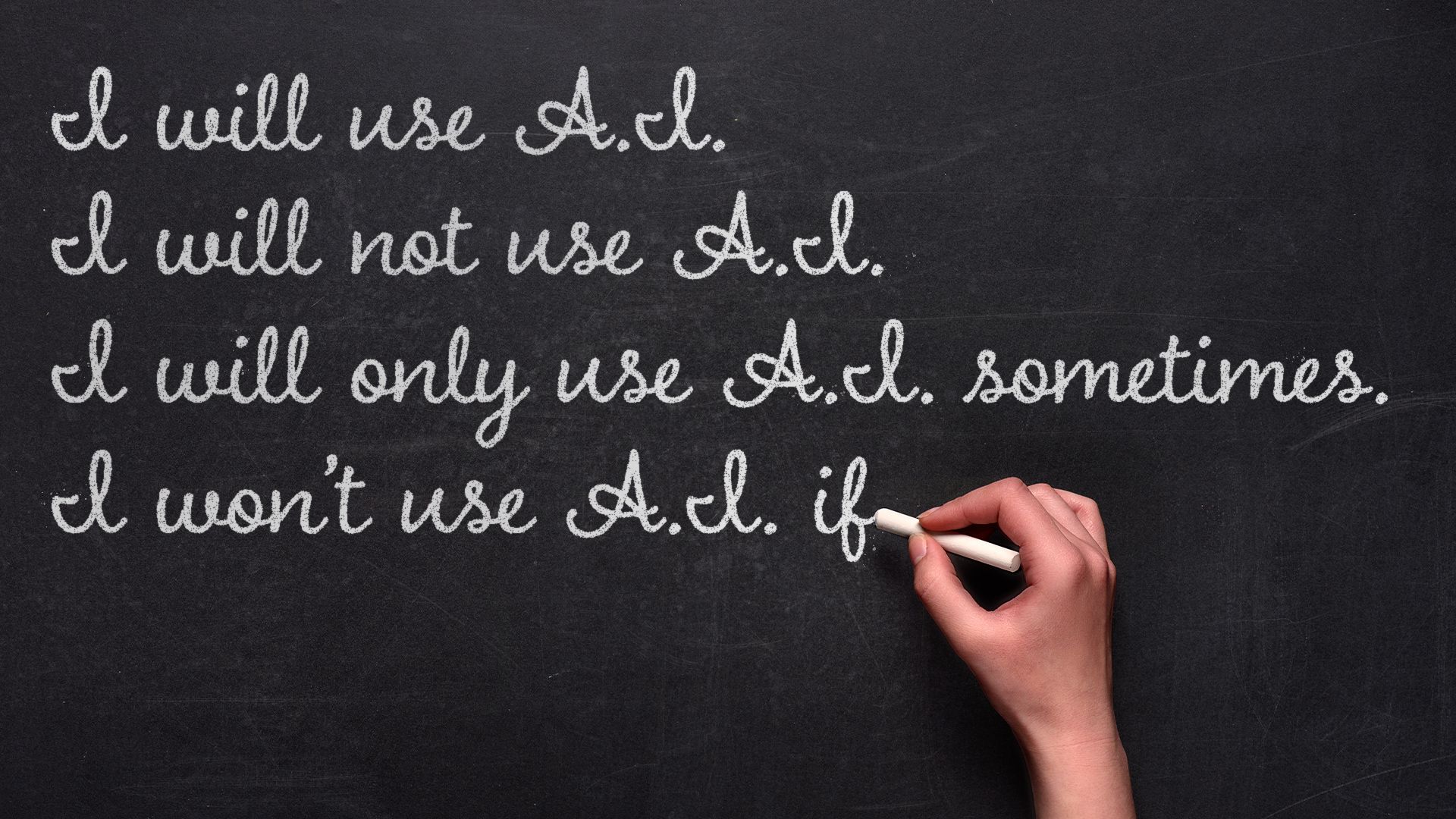

Illustration: Aïda Amer/Axios

Educators, students and parents are heading into another year of generative AI trial and error in the classroom.

Why it matters: Murky policies risk widening inequities and undermining trust, even as most educators agree that AI is here to stay.

By the numbers: The Trump administration's push to expand AI in schools, coupled with an ed tech gold rush, has districts revising rules in real time.

- 89% of high school and college students say they use AI technology for school, according to recent polling from learning platform Quizlet. That's up from 77% in 2024.

- The average teacher is using around 150 different ed tech tools in a given school year, Arman Jaffer, founder of classroom AI assistant Brisk Teaching, told Axios.

Between the lines: This is the fourth school year that students have had access to ChatGPT on personal devices, but many districts still don't allow it on school devices.

- OpenAI's policies forbid those under 13 from using ChatGPT and require users 13 to 18 to get parent or guardian permission. (Anthropic's Claude chatbot, which is popular among college students and educators, requires users to be 18 or older.)

- Regardless of policies and rules, there's no way to stop kids from using ChatGPT on their personal devices, many educators told Axios.

The big picture: Parents and students still aren't sure whether using AI tools counts as cheating or digital literacy.

- It can be both, says David Touretzky, a Carnegie Mellon computer science professor and founder of AI4K12, an initiative to develop national guidelines for AI education.

- "Schools that are already stressed want to ban ChatGPT because they don't know how to cope with it right now," Touretzky told Axios in an email.

- Districts with more resources can experiment with new tools — but some students everywhere will still try to cheat if they can get away with it, Touretzky said.

The intrigue: Sal Khan, founder of Khan Academy and the AI tutor and teaching assistant Khanmigo, told Axios that teachers falsely accusing students of cheating is a growing problem.

- Khan said a family member was writing assignments themselves and then using AI to introduce errors into their own work so that their teacher's AI detector wouldn't flag it as AI-generated.

Reality check: Rachel Yurk, chief information and technology officer at the Pewaukee School District in Wisconsin, told Axios her district blocks AI tools on all school-owned student devices, but it's not pretending the tools don't exist.

- Since 2022, the district has been teaching students about chatbots, including bias, privacy, accuracy, emotional dependency and other problems with generative AI.

What they're saying: Educators know that students are using chatbots to complete homework. "We're not putting our heads in the sand," Katherine Goyette, computer science coordinator at the California Department of Education, told Axios.

- Calling it cheating, Yurk says, misunderstands the problem her district is trying to solve through conversations about responsible use and academic integrity.

- Cheating is taking someone else's work and calling it your own, Yurk says, which gets complicated when you're talking about AI: "There's no person behind it, right?"

- If you're not watching a student do the work, Khan says, you should assume AI is involved.

The solution for many schools will be to change the way teachers assess student proficiency, opting for more in-class writing assignments, oral assessments, class discussions and group work.

- These techniques aren't new, Clay Shirky wrote in a recent New York Times op-ed: "They are simply a return to an older, more relational model of higher education."

- "Educators at all levels are having to rethink how we should create assignments and how we can accurately measure student learning," Touretzky says. "We'll figure it out eventually," but "banning LLMs is not a realistic solution."

What we're watching: Administrators say that the tech is moving too fast to cement rules into place.

- Ednovate Schools, a network of independent public charter schools in California, focuses on innovation and technology and has been honing its AI policy for years, Lanira Murphy, Ednovate's senior director of academics, tells Axios.

- Murphy says the AI policy is flexible, focusing on conversation and learning rather than strict punishment for misusing tools.

- The worst approach, Murphy says, is to have no policy at all. "If my child uses ChatGPT and you didn't have a policy, but then you punish them, we have a problem."