AI's promised nirvana is always a few years off


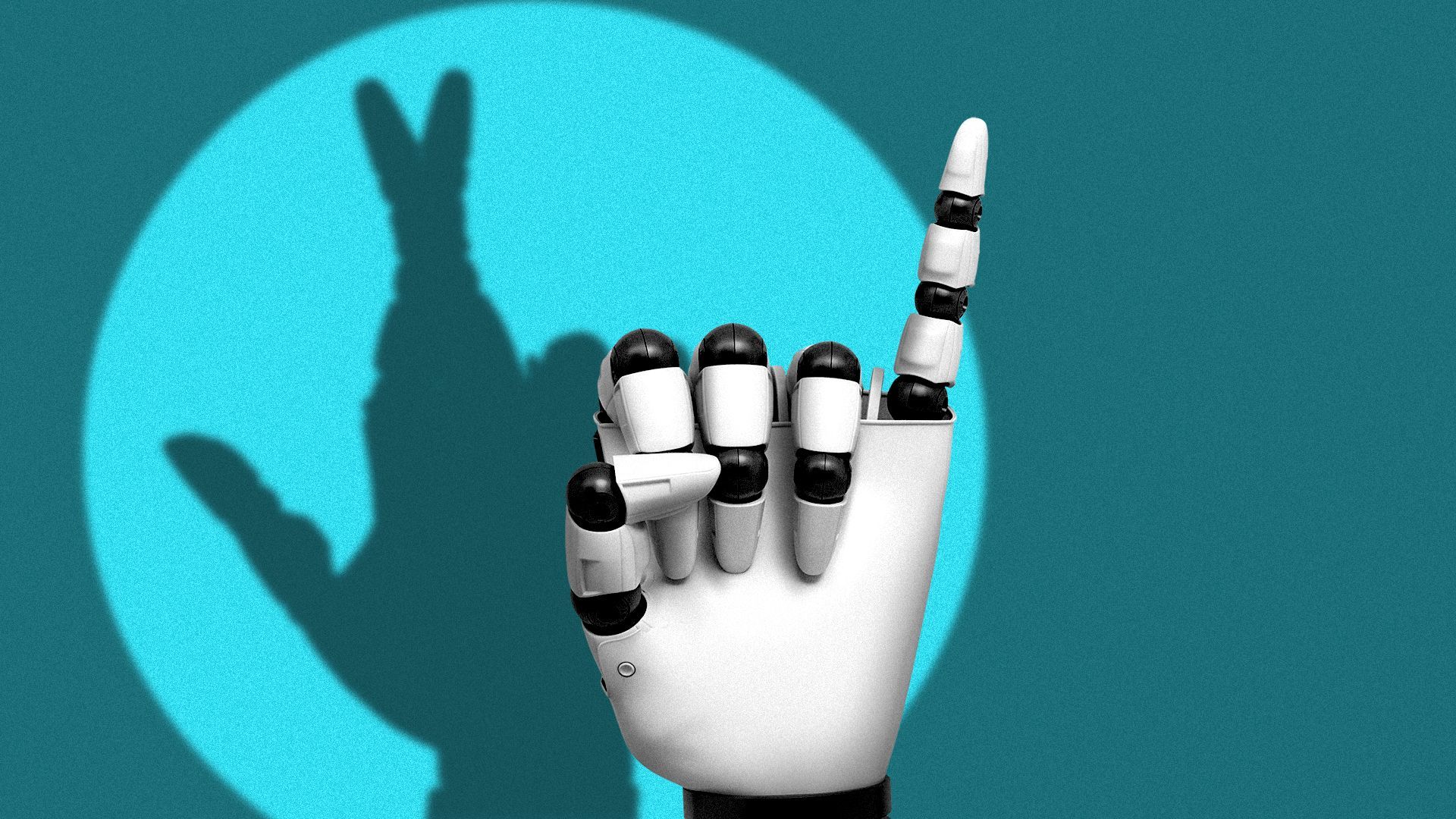

Illustration: Lindsey Bailey/Axios

Two years ago, when ChatGPT arrived, tech leaders suggested that the next few years would bring incredible advances in AI. Today, they're saying the same thing.

Why it matters: While the AI industry — powered by enormous investments and competition — has made steady strides, it also keeps promising vast breakthroughs right around a corner it never seems to turn.

Driving the news: Silicon Valley's AI fever has spiked recently with a string of optimistic predictions that artificial general intelligence (AGI), or human-level AI, is imminent.

- AGI — defined as "a system that's capable of exhibiting all the cognitive capabilities humans can" — is "probably a handful of years away," Google DeepMind CEO Demis Hassabis said last month on the Big Technology podcast.

- "Over the next two or three years, I am relatively confident that we are indeed going to see models that show up in the workplace, that consumers use — that are, yes, assistants to humans but that gradually get better than us at almost everything," Anthropic CEO Dario Amodei said in a Wall Street Journal interview at Davos.

- Around the same time, OpenAI CEO Sam Altman wrote, "We are now confident we know how to build AGI as we have traditionally understood it."

- According to a New York Times report, Altman also told then-President-elect Trump, shortly before his inauguration, that the industry would deliver AGI sometime during Trump's new administration, i.e., in less than four years.

The big picture: In the more than two years since ChatGPT's debut on Nov. 30, 2022, researchers have endowed large language models (LLMs) with new multimodal capabilities encompassing audio, images and video.

- They've also extended models' capacity to "reason," or think through solutions to problems in a more methodical and reflective way.

Yes, but: Huge hurdles to AGI remain in other realms where progress over the last two years looks far less steady.

- AI systems still regularly fail at common-sense tasks like counting how many times a letter repeats in a long word or understanding the passage of time.

- They also still make things up. People do that too, of course, but unlike LLMs, people generally know when they are fibbing or "confabulating."

Embarrassingly, these errors keep turning up even in high-profile settings like Google's Super Bowl ad for Gemini, which originally featured an AI answer that misstated the global market share of Gouda cheese.

- Google said the problem wasn't AI "hallucination" but rather incorrect information on the web, per The Verge. Either way, the AI answer was wrong.

No one knows whether these problems can be fixed by throwing more processing power and better algorithms at them — the "scaling up" strategy that Altman and most of the industry embrace. Perhaps entirely new architectures and techniques will be required, as some high-profile industry critics believe.

Another wrinkle is that definitions of AGI vary from company to company.

- Much of the industry has coalesced around the idea that AGI means "AI that can match human capacity," whereas superintelligent AI means "AI that exceeds human capacities across the board." But you can't be sure everyone using these terms agrees.

The bottom line: "Coming in just a few years" makes a convenient AGI timeline because it's close enough to be tantalizing but far enough out that, in a couple of years, most people will have forgotten your original promise, and you can make it again.