Deepfakes are easy to make, but also easy to detect

Illustration: Aïda Amer/Axios

Generating a plausible deepfake video that swapped my face for Meghan Markle's took about 10 seconds and showed how easy it would be to pretend to be someone else in a live video.

Why it matters: The deepfake experiment on the sidelines of the DEF CON hacker conference also came with a silver lining: Technology is getting better at detecting deepfakes, too.

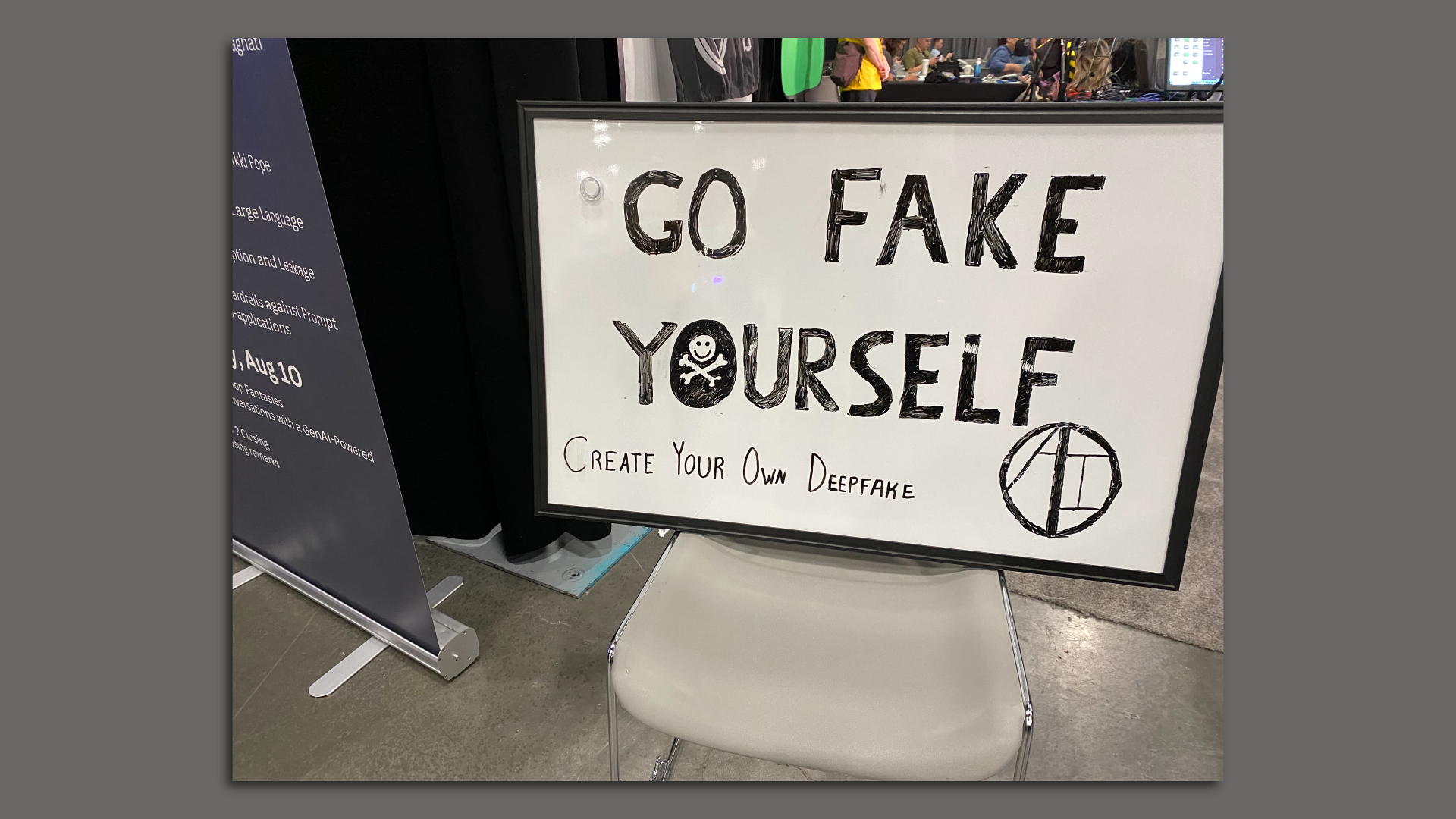

Participants in the DEF CON hacker conference over the weekend had the opportunity to create a live video deepfake in a lab held by AI Village in partnership with the Defense Advanced Research Projects Agency (DARPA).

Zoom in: The DEF CON experiment tested both the creation and detection of deepfakes.

- The AI Village worked with Brandon Kovacs, a senior red team consultant at cybersecurity services provider Bishop Fox.

- The team used an open-source tool called DeepFaceLive to generate participants' celebrity dupes in real time.

- DARPA-backed Semantic Forensics (SemaFor) then ran its own deepfake detection tool — trained on years' worth of research from DARPA and several academic research partners — to see if it could spot the fakes.

How it works: For my face-swapped deepfake, I stood in front of a green screen and waited a few seconds before the AI model put Markle's face over my face.

- Some participants were also made to look like Keanu Reeves, Johnny Depp, and others. Kovacs also had the ability to make my voice sound like someone else's.

- Kovacs then recorded a 10-second video of me moving my head and waving my arms, featuring Markle's face instead of my own.

- Afterward, I waited a few minutes while SemaFor ran two checks on my video: one that parsed the video frame by frame to check for anomalies in the metadata and other video analytics, and another that checked my face for signs of manipulation.

- Luckily, my Meghan Markle impression was flagged as a fake.

- "What's interesting is that with our eyes, it was not very detectable that it wasn't your natural face," Nathan Schurr, a volunteer who helped run the deepfake lab, told Axios.

Yes, but: Organizers asked me to remove my glasses to film the video since their model wasn't specifically designed to cut around them.

The intrigue: SemaFor's tool assigns each video a score between -5 and +5, where a positive score likely means the video is a fake.

- Mine received scores of 0.66 and 0.26 in the two scans, barely making the cut.

- But part of that is because SemaFor's tools didn't have access to their cloud server from the DEF CON floor and were relying on less data than usual, Schurr said.
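To make the scoring concrete, here is a minimal sketch of how a score on that scale might be read. It is an illustration only, not SemaFor's actual code: the function name `interpret_score`, the labels it returns, and the treatment of a score of exactly zero as "not flagged" are all assumptions.

```python
# Hypothetical illustration of the scoring scale described above.
# None of these names come from SemaFor; they are assumptions for this sketch.

SCORE_MIN = -5.0
SCORE_MAX = 5.0


def interpret_score(score: float) -> str:
    """Map a score in [-5, +5] to a label; positive means likely fake."""
    if not SCORE_MIN <= score <= SCORE_MAX:
        raise ValueError(f"score {score} is outside [{SCORE_MIN}, {SCORE_MAX}]")
    # Assumption: a score of exactly 0 is treated as not flagged.
    return "likely fake" if score > 0 else "not flagged"


# The two scores reported for the reporter's video.
for score in (0.66, 0.26):
    print(f"{score:+.2f} -> {interpret_score(score)}")
```

Both reported scores sit just above zero on a scale that runs to +5, which is why they only barely count as flagged.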

Between the lines: Unlike some other deepfake generators, the tool used in this experiment works in real time, which highlighted just how easy it could be for scammers to pretend to be someone else in a live video call.

Threat level: North Korean IT workers and other scammers are already using these tools to trick employers into hiring them.

- Earlier this year, a scammer used similar deepfake tools to pretend to be a multinational firm's CFO on a video call and tricked a legitimate finance worker at the company into sending a $25 million payment.

- The Department of Justice recently arrested a Nashville resident who allegedly helped North Korean workers steal identities and get hired by American and British companies.

What's next: Wil Corvey, the DARPA program manager overseeing the SemaFor project, told Axios on the sidelines of DEF CON that UL Research Institutes has expressed interest in taking over the project as it wraps up its DARPA funding cycle.

The bottom line: Corvey is bullish that detection tools are keeping up with the pace of AI deepfake creations — and that the nightmare that many fear might not come.

- "We're headed towards a really complicated future," Corvey said.

- "But I think that we're in communities that recognize that and are really taking action in concrete ways at addressing any concerns that we have."

Go deeper: Deepfakes' parody loophole

Editor's note: This story was corrected to reflect that the Bishop Fox team used the open-source tool (but did not create it) and that the lab was held by AI Village in partnership with DARPA (it was not sponsored by DARPA).