A dark view of the future of autonomous weapons

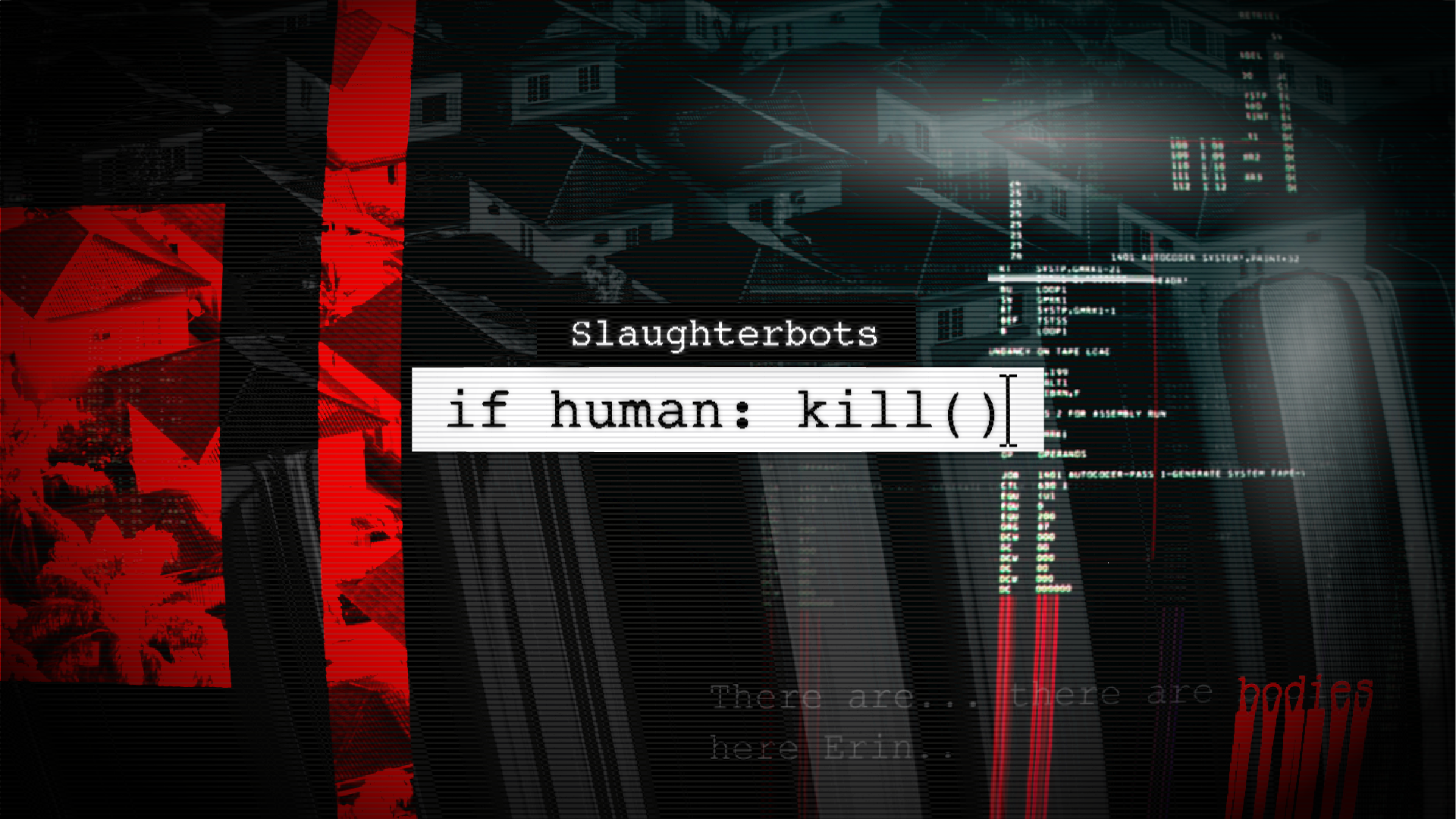

A still from the video "If Human: Kill ( )." Image: Future of Life Institute

A new short film warns of the coming risks posed by the development and proliferation of lethal autonomous weapons.

Why it matters: Drones with the ability to autonomously target and kill without the assistance of a human operator are reportedly already being used on battlefields, and time is running out to craft a global ban on what could be a destabilizing and terrifying new class of weapon.

What's happening: The Future of Life Institute (FLI), a nonprofit focused on existential risks from technology, today released "If Human: Kill ( )," a video that depicts what the future could be like if lethal autonomous weapons go unregulated.

- In a word: horrific. The film splices fictional news clips to show drones and robots armed with automatic weapons using facial recognition to identify and kill political protesters and police, aid bank robberies, and assassinate scientists.

Flashback: The new film is a sequel to a 2017 video by FLI that gave these autonomous weapons a name: "slaughterbots."

- While many of the concerns about autonomous weapons focus on the possibility they could turbocharge warfare between states, or even go rogue "Terminator"-style, the FLI videos imagine a future where AI weapons fall into the hands of criminals and terrorists who can use them to wreak havoc.

- Instead of Skynet, think self-controlled and self-targeting AK-47s, a weapon that has already killed millions of people around the world.

What they're saying: "The weapons we portray in the film are mainly going to be used by civilians on civilians," says Max Tegmark, co-founder of FLI and an AI researcher at MIT. "And I'm so worried about this precisely because they're so small and cheap that they can proliferate."

What to watch: Later this month, a UN conference in Geneva will discuss whether to create a new international treaty banning weapons systems that can select and engage targets without "meaningful human control," as groups like FLI and the International Committee of the Red Cross have called for.

The bottom line: "Superpowers should realize that it's not in their interest to allow AI weapons of mass destruction that everyone would be able to afford," says Tegmark.

Editor's note: This story was first published on Dec. 1.