New device translates brain activity into speech, but with limits

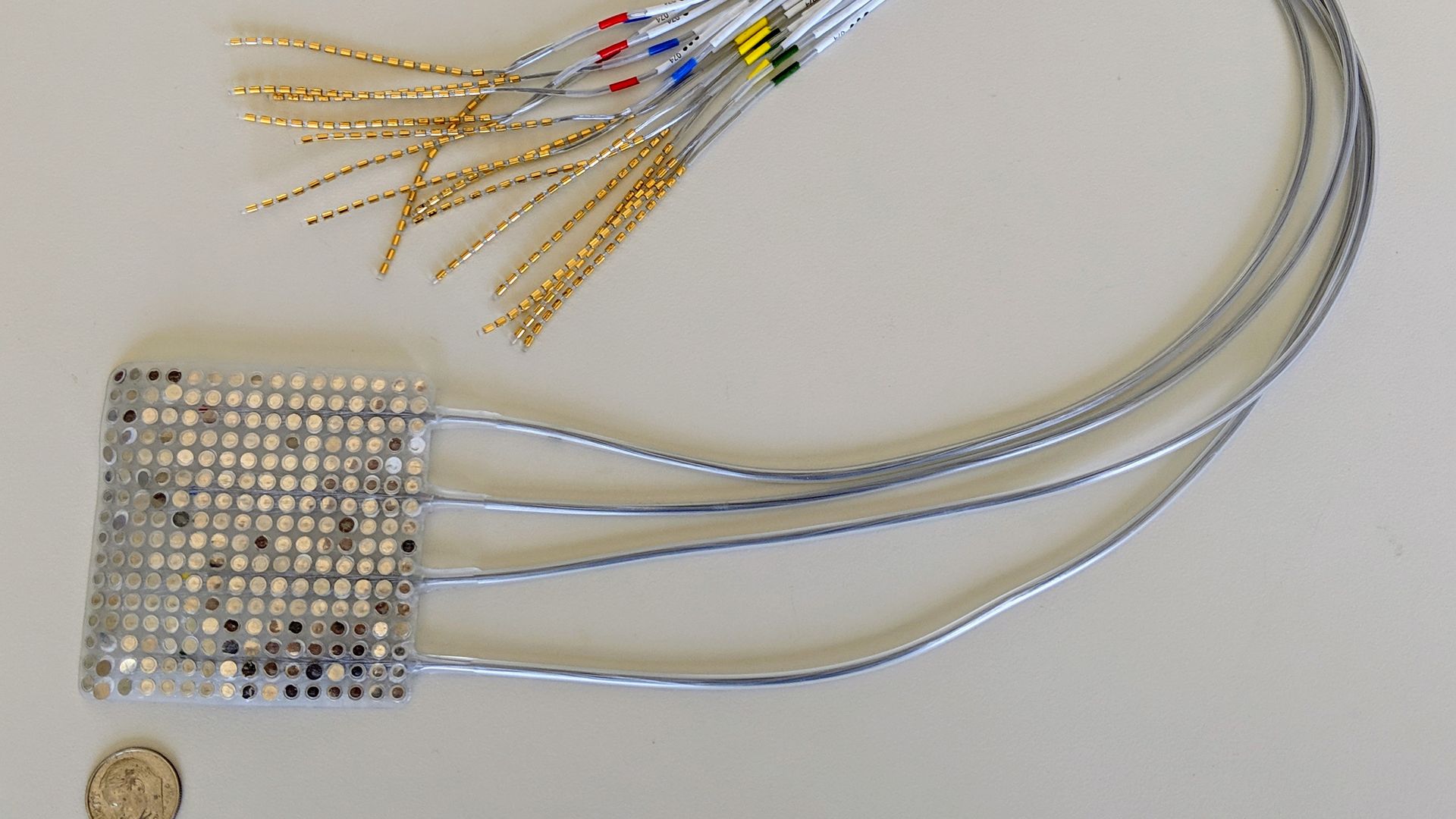

The type of intracranial electrodes used to record brain activity in this study. Photo: UCSF

Scientists have developed a tool that decodes brain signals for the speech-related movements of the jaw, larynx, lips and tongue and synthesizes the signals into computerized speech, according to a small study published in Nature on Wednesday.

Why it matters: Researchers want to help people who've lost their ability to speak. Many current methods translate eye or facial muscle movements into letter-by-letter spelling, but this is slow and doesn't sound like fluid speech. This study shows the early stages of a new and faster way of "speaking" in full sentences, but it comes with its own caveats, like the need to place electrodes directly on the brain.

The backdrop: Humans' natural speech averages around 120–150 words per minute.

- Typing controlled by the eyes or cheek, such as the system used by Stephen Hawking, can produce an average of 10 words per minute.

- Brain-computer interfaces (BCIs) for communication can be non-invasive, often using EEG or MEG imaging devices to measure electrical activity and AI to translate it.
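To put the speaking rates above in perspective, here is a minimal arithmetic sketch. It uses only the figures quoted in this article (120–150 words per minute for natural speech, 10 for eye- or cheek-controlled typing); the 300-word message length and the function name are illustrative assumptions.

```python
# Rates quoted in the article, in words per minute.
NATURAL_WPM = (120, 150)   # natural speech, low and high end
SPELLING_WPM = 10          # eye- or cheek-controlled typing

def minutes_to_say(words: int, wpm: float) -> float:
    """Time in minutes needed to convey `words` at a given rate."""
    return words / wpm

# How many times faster natural speech is than letter-by-letter typing.
speedup = tuple(rate / SPELLING_WPM for rate in NATURAL_WPM)

# Hypothetical 300-word message (an assumption, not a figure from the study).
typing_minutes = minutes_to_say(300, SPELLING_WPM)
speech_minutes = tuple(minutes_to_say(300, rate) for rate in NATURAL_WPM)

print(speedup, typing_minutes, speech_minutes)
```

In other words, a message that takes a couple of minutes to say aloud would take roughly half an hour to spell out at the typing rate cited here.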

Non-invasive tools have captured the imagination, but haven't seen much success yet.

- You may have heard that Facebook is working on a machine to read your brain, and that others, including Google, believe "brain uploading" will be here in decades.

- Elon Musk — who described how his neuroscience company Neuralink will merge humans with machines on "Axios on HBO" — tweeted earlier this week that their BCI is "coming soon."

- Alter Ego, a research project described at TED2019, allows users wearing a special facial patch to silently speak a command or query and have a computer silently talk back to the user via bone conduction, Axios' Ina Fried reports.

Invasive BCIs, such as those that rely on surgically placed recording electrodes or microelectrodes inserted into the cerebral cortex, have shown greater promise for speech clarity, at least so far. Some recent advances include...

- A small study published in January in Scientific Reports offering a proof-of-concept for an AI system translating simple thoughts into sometimes-recognizable speech.

- A November study in the Journal of Neuroscience found that the code used in brain motor areas corresponds to speech movements, also called "speech gestures," not whole sounds. So, instead of coding the sound "b," the brain codes "close your lips [and] vibrate your vocal folds," one of the study authors, Matt Goldrick, tells Axios.

"Currently, in order to get the required signal resolution, BCIs need to be implanted. There is a group at Facebook actively working on non-invasive BCIs. ... That seems far-fetched to me. To get the resolution you need for speech, we currently need implanted BCIs."— Matt Goldrick, professor and chair, Northwestern University's linguistics department

What they did: The Nature study authors held a press briefing to discuss their invasive BCI...

- They selected 5 patients undergoing epilepsy surgery and placed a grid of electrodes on the brain surface (a technique called electrocorticography), focusing on the regions of the brain responsible for vocal tract movement.

- The patients read aloud hundreds of sentences.

- Simultaneously, their brain signals were sent to one AI program that decodes the movements of the vocal-tract articulators and to another that synthesizes speech and "speaks" the words.

- 1,755 English-speaking volunteers listened to blocks of the AI-generated words to gauge how well the virtual vocal cord translation went.

- They also tested the system on the patients miming the words (instead of vocalizing directly).
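The two-step approach described above (brain signals to vocal-tract movements, then movements to sound) can be sketched as a simple pipeline. This is a toy illustration only: the article does not describe the study's actual models, so a plain linear map stands in for each AI program, and every name, dimension and weight below is a hypothetical placeholder.

```python
from typing import List

Matrix = List[List[float]]

def linear_map(frames: Matrix, weights: Matrix) -> Matrix:
    """Apply a weight matrix (out_dim x in_dim) to every time frame.

    Stand-in for a trained decoder; the real models are not specified here.
    """
    return [[sum(w * x for w, x in zip(row, frame)) for row in weights]
            for frame in frames]

def two_stage_decode(neural: Matrix,
                     w_articulators: Matrix,
                     w_acoustics: Matrix) -> Matrix:
    """Stage 1: neural activity -> articulator movements.
    Stage 2: articulator movements -> acoustic features for a synthesizer."""
    movements = linear_map(neural, w_articulators)
    return linear_map(movements, w_acoustics)

# Toy data (made up): 2 time steps, 3 electrodes -> 2 articulators -> 1 acoustic feature.
neural = [[1.0, 0.0, 2.0],
          [0.0, 1.0, 1.0]]
w_articulators = [[1.0, 0.0, 0.5],
                  [0.0, 2.0, 0.0]]
w_acoustics = [[1.0, 1.0]]

acoustic = two_stage_decode(neural, w_articulators, w_acoustics)
print(acoustic)
```

Splitting the problem this way means the second stage depends only on vocal-tract movements, not on any one person's brain recordings, which is the property the NIH statement later in this article points to.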

What they found:

- For sentences that were vocalized, they found about 70% of words were correctly transcribed, says study author Josh Chartier, a UC San Francisco bioengineering graduate student.

- Speech synthesis was also possible when patients mimed sentences, but it was less accurate.

- "This is an exhilarating proof of principle that with technology that is already within reach, we should be able to build a device that is clinically viable in patients with speech loss," says study author Edward Chang, professor of neurological surgery and member of the UCSF Weill Institute for Neuroscience.

What they're saying: Research in this area is making exciting progress but remains in the very early stages, experts say.

- "[These] results provide a compelling proof of concept for a speech-synthesis BCI, both in terms of the accuracy of audio reconstruction and in the ability of listeners to classify the words and sentences produced. However, many challenges remain," write Chethan Pandarinath and Yahia Ali in an accompanying News & Views piece.

- "An important limitation of this work is that the neural codes are specific to each person. This algorithm learns to decode [using] examples of the person speaking while their brain is recorded. But if a person came into the lab, already paralyzed and unable to speak, there would be nothing for the algorithm to learn from. This is the biggest challenge the field faces," Goldrick says.

- But the National Institutes of Health says in a press release: "By breaking down the problem of speech synthesis into two parts, the researchers appear to have made it easier to apply their findings to multiple individuals. The second step specifically, which translates vocal tract maps into synthetic sounds, appears to be generalizable across patients." NIH partly funded this study via its BRAIN Initiative.

What's next: Chang says next steps include making the technology more natural and targeting patients who are unable to speak to see if the same algorithms work.

- "This will require us to develop better technology for sampling brain signals, more powerful algorithms, and a deeper understanding of how the brain stores and processes speech," says Goldrick, who was not part of this study.

Go deeper: Watch UCSF's video here or listen to 2 samples here of a research participant reading a sentence, followed by the synthesized version of the sentence generated from their brain activity.