The AI crossroads: Dystopia vs. utopia

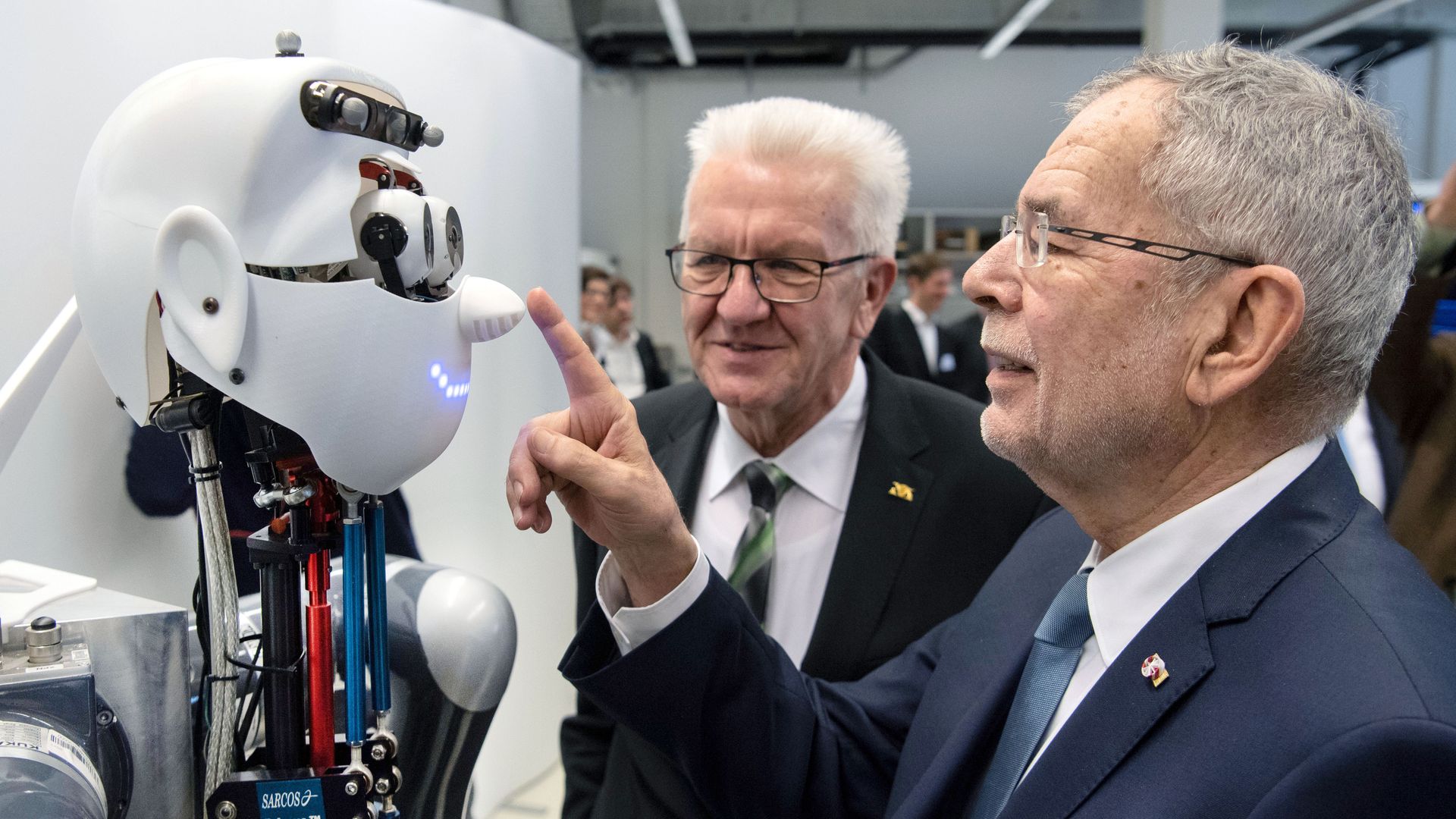

The president of Austria boops a robot on the nose. Photo: Marjian Murat/AFP via Getty Images

While researchers and business leaders barrel ahead to invent and apply artificial intelligence, a small, vocal minority has been sounding the alarm, urging the field to temper the technology’s dangers before widely deploying it.

Driving the news: In a new Pew survey of nearly 1,000 tech experts, fewer than two-thirds expect technology to make most people better off in 2030 than today. Many also express a fundamental concern that AI in particular will be harmful.

What they're saying: Experts argue AI may make unfair decisions, displace an enormous number of human workers and lead to geopolitical upheaval.

- “Algorithms aren’t neutral; they replicate and reinforce bias and misinformation. They can be opaque. And the technology and means to use them rest in the hands of a select few organizations,” says Susan Etlinger, an analyst at Altimeter Group.

- “We need to address a difficult truth that few are willing to utter aloud: AI will eventually cause a large number of people to be permanently out of work,” says Amy Webb, founder of the Future Today Institute.

- “This will further destabilize Europe and the U.S., and I expect that, in panic, we will see AI be used in harmful ways in light of other geopolitical crises,” says danah boyd, a Microsoft researcher and founder of the Data & Society research institute.

- Some of those surveyed also argue that people may abdicate an essential element of their humanity and start to accept whatever AI tells them: “We won’t be more autonomous; we will be more automated as we follow the metaphorical GPS line through daily interactions,” says Baratunde Thurston, a futurist.

The other side: Other experts balance these dark forecasts, saying dystopia is not inevitable.

- “What worries me most is worry itself: an emerging moral panic that will cut off the benefits of this technology for fear of what could be done with it,” says Jeff Jarvis, director of the Tow-Knight Center at the City University of New York.

- “The right question is not ‘What will happen?’ but ‘What will we choose to do?’” says Erik Brynjolfsson, director of the MIT Initiative on the Digital Economy.