What we're reading: The new arms race over AI-specific chips

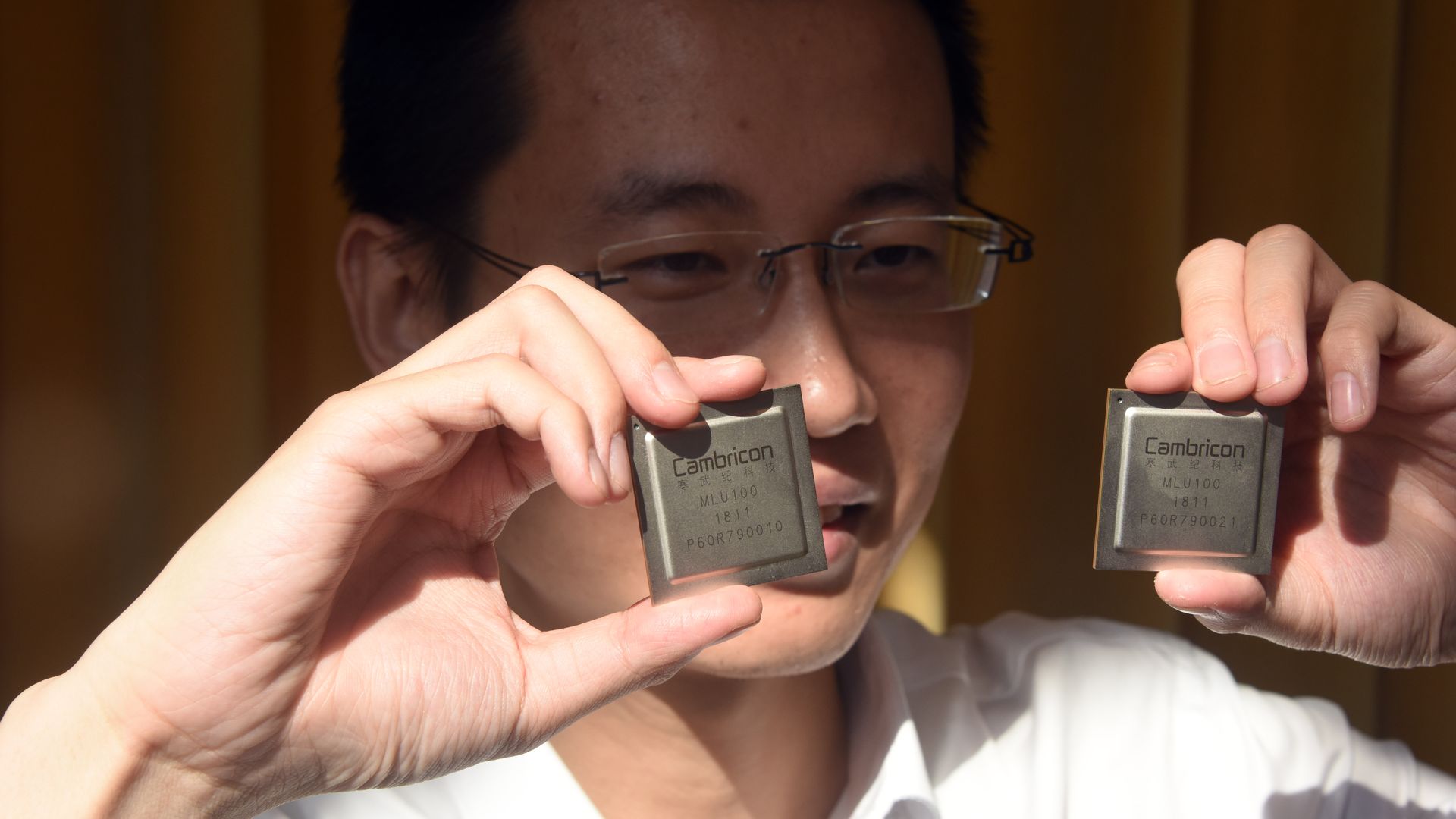

Cambricon Technology CEO Chen Tianshi holds up his company's AI processors. Photo: Sun Zifa/China News Service/VCG via Getty Images

After decades of dominance by a few key players, the semiconductor race has been blown wide open. The shift is driven partly by a new demand for AI-specific chips, according to a report from Andy Patrizio in Ars Technica.

Why it matters: There's a lot of new money pouring into a lucrative sector, setting up an explosion of new players — and another venue for the AI arms race between the US and China.

The backdrop: Until fairly recently, the desktop and mobile semiconductor markets were unipolar, ruled by Intel and ARM, respectively. Several changes blew the lid off the industry, Ars reports, but the rise of computing-intensive AI has led to the biggest proliferation of new technologies from both established companies and new players.

The old guard:

- IBM released an AI processor last year that plays nice with hardware from Nvidia. Nvidia's GPUs have long been popular in machine-learning circles, and the company now also makes newer AI-specific processors.

- Intel has bought up companies to speed its AI chip development, and offers a whole family of AI chips for various uses.

- Two processors from ARM are specifically designed for image recognition.

The upstarts:

- Tech companies not known for semiconductors — Google, Microsoft, Amazon, Apple, Facebook, and even Tesla — are building AI-specific processors to use in their own hardware.

- Dozens of startups are getting into the game, according to The New York Times.

- China's big three tech companies — Baidu, Alibaba, and Tencent — have released or are developing similar tech. Baidu announced an AI-specific processor called Kunlun last week, while Alibaba and Tencent are deploying AI processing capabilities in their cloud platforms.

"People see a gold rush; there's no doubt," Brad McCredie, VP of IBM Power systems development, told Ars Technica.

Why now? Specialized tasks like machine learning benefit from tailored chips that do one thing well — and efficiently — rather than many things more slowly, Patrizio writes. Different types of machine learning benefit from different chip architectures, opening up a whole range of new needs.

What's next: Many of the chips being developed will never see the light of day, either because they're being made for companies' internal use, or because they'll quickly be bulldozed by the considerable competition. But there's a hefty prize in store for the winners.

Go deeper: Nerd out on the gory details at Ars Technica
