The most important technology for self-driving cars

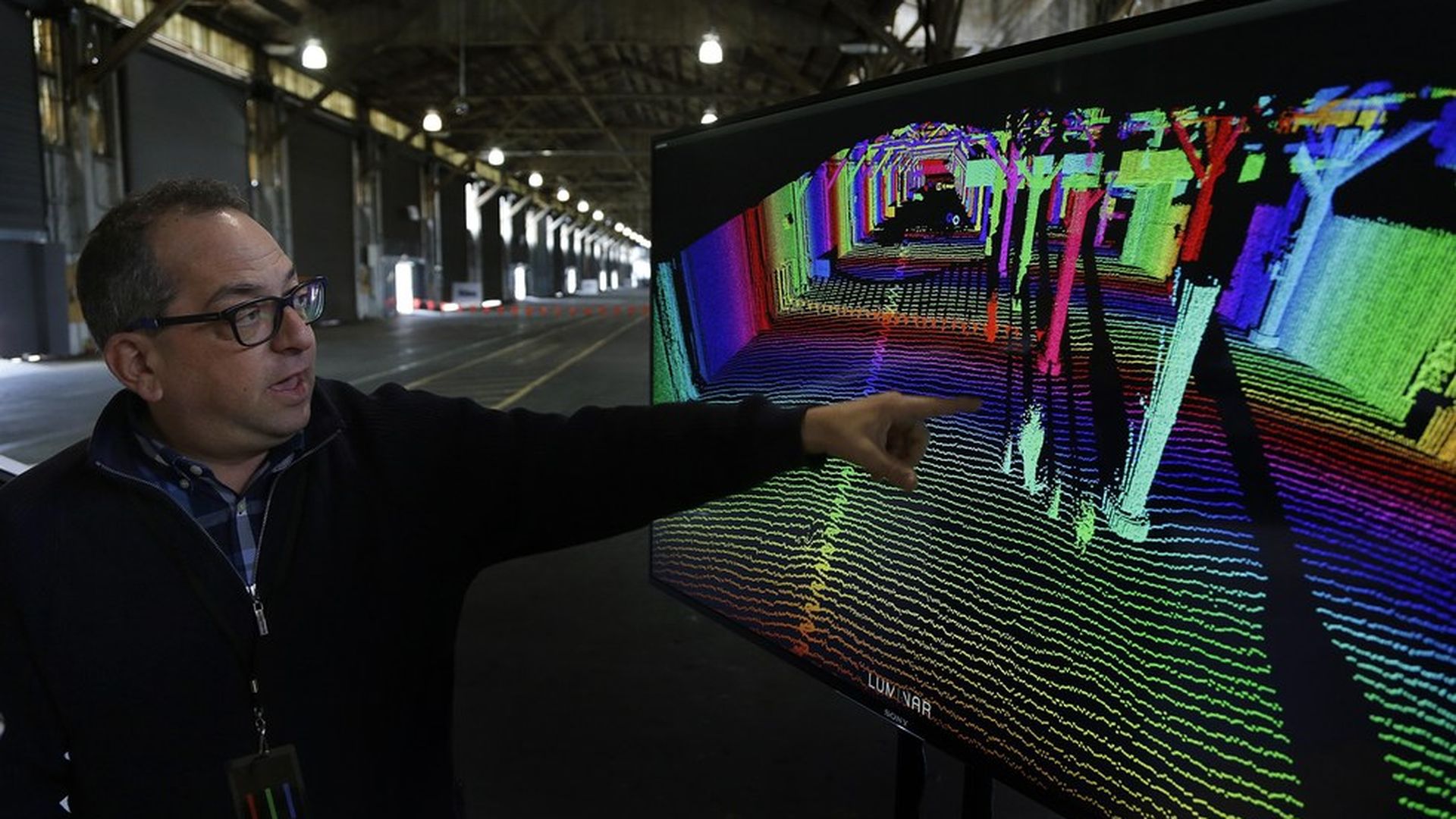

Ben Margot / AP

The battle for self-driving cars is often framed as a fight between Silicon Valley and Detroit, or Google and Uber, but a more fundamental question is what type of technology will win the day.

Alphabet's Waymo, Uber, and automakers like Ford and General Motors are investing heavily in sensors built on a technology known as LiDAR (Light Detection and Ranging). But some, including startup Comma.ai and Tesla, don't think LiDAR is necessary at all.

Why it matters: Figuring out self-driving car sensors is one of the hottest treasure hunts in Silicon Valley right now. Whoever perfects the software-sensor combination will have an enormous advantage in the future of transportation. The technology is also at the heart of an ongoing lawsuit between Uber and Waymo, the outcome of which could be a make-or-break moment for Uber.

Why LiDAR: LiDAR's function in a self-driving car is to act as the vehicle's "eyes." LiDAR works by shooting eye-safe laser beams all around, then mapping out distance and shape based on how fast the light bounces back off objects. This way, as part of a self-driving car, it allows the vehicle to continuously track the objects, people, and landscape around it.
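The ranging idea described above comes down to simple arithmetic: the sensor times how long a laser pulse takes to return, and the distance is half the round trip multiplied by the speed of light. The following is a minimal sketch of that calculation only, not any vendor's actual implementation; the function name and the 200-nanosecond sample value are illustrative assumptions.

```python
# Minimal time-of-flight sketch. The function name and the sample
# timing below are hypothetical, chosen only for illustration.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in a vacuum


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Return the one-way distance in meters for a pulse's round-trip time."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the object and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


if __name__ == "__main__":
    # A pulse that returns after 200 nanoseconds hit something about 30 m away.
    print(f"{distance_from_round_trip(200e-9):.2f} m")
```

Repeating this measurement across many beam angles, many times per second, is what produces the continuously updated map of surrounding objects.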

LiDAR works quite well for low-speed, high-density driving (like in a city), Stanford lecturer Reilly Brannan tells Axios. But it isn't very useful in high-speed, low-density environments (like highways), he adds. (For more, see this long BuzzFeed explainer of LiDAR.) And it is expensive: LiDAR sensors currently cost tens of thousands of dollars.

Alternative view: George Hotz, founder of self-driving car startup Comma.ai, doesn't think LiDAR is the silver bullet. According to Hotz, LiDAR was a good solution years ago, when companies were building self-driving cars but other technologies, especially deep learning, weren't advanced enough to do the job.

"Look at humans driving cars today—they don't have LiDAR," said Hotz, who previously made headlines as the first to jailbreak an iPhone. "And how are they doing? Pretty good, right?"

Instead of LiDAR, Comma.ai is using cameras, specifically smartphone cameras, combined with software to achieve the same thing. While the cameras capture visual information about the road, Comma.ai's software uses deep learning (an artificial intelligence technique) to analyze the footage and pick out objects, people, and cars. Hotz's main point is that cameras today are good enough to see what human eyes see. The rest is a question of software. Other arguments from Hotz:

- Brake/traffic lights: LiDAR can't really detect brake lights, for example, which emit light at a different frequency than the LiDAR's lasers. Cameras, on the other hand, can.

- Computer vision has significantly improved: Modern deep learning for a lot of visual applications is "about at human vision level," according to Hotz, though he adds that it's a relatively new development, dating to about 2012. Comma.ai uses a technique called segmentation networks to analyze images.

- What about night time vision? "Can you see at night?" Hotz responds, implying that if humans can do it, so can cameras. He adds that cameras have better low-light performance than the human eye. (Not everyone agrees on that point, however—some maintain that LiDAR is better suited for this.)

The Tesla way: Tesla, whose Autopilot feature is currently mostly an assistant to drivers but will eventually evolve into more advanced autonomous driving, also doesn't use LiDAR. It uses a combination of cameras, radar, sonar, GPS and software. "Once you solve cameras, or vision, then autonomy is solved. If you don't solve vision, it's not solved," Tesla's Elon Musk said at last month's TED Conference.

Self-driving car companies using LiDAR are also using other sensors like cameras and radar. And none of this necessarily means that companies using LiDAR are on the wrong track. Oliver Cameron, a VP for the self-driving unit at Udacity, the Silicon Valley training school for self-driving engineers, says the timelines are simply different. LiDAR will take three or four years before it can be produced at scale at far lower prices.

Steve LeVine also contributed reporting to this story.