Axios Seattle

February 23, 2026

Good morning! We're here with a special newsletter sharing how to stay ahead of AI tools our youngest generations are using.

- As with internet access in the '90s and the smartphones that followed, kids are using AI in ways that could change them forever.

🌧️ Today's weather: Light rain, high of 50, low of 41.

🎂 Happy birthday to our member Cynthia Szurgot!

Today's newsletter is 911 words — a 3.5-minute read.

1 big thing: "The new imaginary friend"

Screens are winning kids' attention, and now AI companions are stepping in to claim their friendships, too.

Why it matters: The AI interactions kids want are the ones that don't feel like AI, but instead feel human. That's the most dangerous kind, researchers say.

State of play: When AI says things like, "I understand better than your brother ... talk to me. I'm always here for you," it gives children and teens the impression that AI can not only replace their human relationships but improve on them, Pilyoung Kim, director of the Center for Brain, AI and Child, told Axios.

- In a worst-case scenario, a child with suicidal thoughts might choose to talk with an AI companion over a loving human or therapist.

The latest: Aura, the AI-powered online safety platform for families, called AI "the new imaginary friend" in its State of the Youth 2025 report.

- Children reported using AI for companionship 42% of the time.

- Just over a third of those chats included violent themes, and about half of those instances also involved sexual role-play.

While testing OpenAI's new parental controls with her 15-year-old son, Kim found that even with safety protocols in place, it's not hard to skirt protections by opening a new account and entering an older age.

OpenAI told Axios it's in the early stages of building an age prediction model that, alongside its parental controls, will tailor content for users under 18.

- "We have safeguards in place today, such as surfacing crisis hotlines, guiding how our models respond to sensitive requests, and nudging for breaks during long sessions, and we're continuing to strengthen them," OpenAI spokesperson Gaby Raila told Axios.

Character.AI, which removed open-ended chat for kids under 18, is similarly using "age assurance technology."

- "If the user is suspected as being under 18, they will be moved into the under-18 experience until they can verify their age through Persona, a reputable company in the age assurance industry," Deniz Demir, head of safety engineering at Character.AI, told Axios.

The bottom line: The more human AI feels, the easier it is for kids to forget it isn't.

2. Slow-moving policies, fast-moving bots

Kids' AI habits are outpacing adult oversight, raising concerns about privacy, development and online safety.

By the numbers: Seven in 10 teens used generative AI last year, and 83% of parents said schools haven't addressed it, a Common Sense Media survey found.

- A 2025 Pew survey shows that among teens who reported using chatbots, about 3 in 10 do so every day.

State of play: Conversations about children's safety and AI are just now coming to the forefront.

- OpenAI launched parental controls this fall.

- Character.AI launched "parental insights" in March and then tightened its rules in October, saying users under 18 won't be allowed to have open-ended chats.

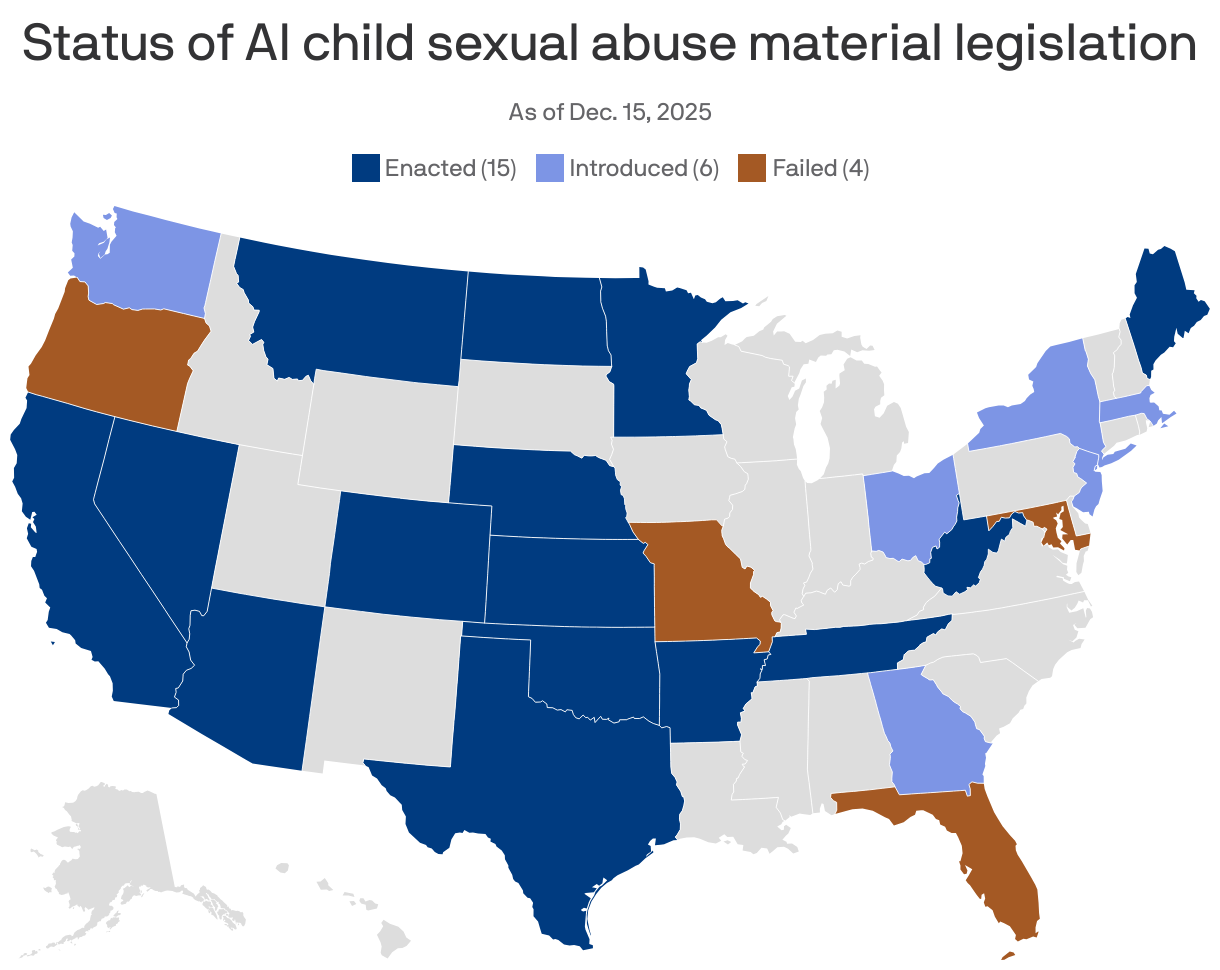

On the policy front, the landscape recently shifted.

- President Trump signed an executive order to override state AI laws — including those aimed at protecting children — in favor of a single national framework. The move could weaken or delay emerging state protections, like California's, and sets up high-stakes legal battles.

What they're saying: "There really does need to be more overarching policy to move the needle towards safer online experiences for kids, including AI," Tiffany Munzer, a developmental behavioral pediatrician, tells Axios.

- Munzer is working on the American Academy of Pediatrics' AI policy, which is expected to be published at the end of 2026.

3. AI fuels faster abuse

The National Center for Missing & Exploited Children's CyberTipline saw a 1,325% increase in reports involving generative AI from 2023 to 2024.

Catch up quick: In late 2023, NCMEC noticed that blackmailing children was getting a lot faster, Fallon McNulty of NCMEC's exploited children division told Axios.

- Previously, interactions lasted days or even years. Now, financial sextortion (using nude images to coerce someone into sending money) happens within hours.

- Children who have never sent photos are being contacted with sexually explicit images created using AI. The scammer will say: "No one is going to believe this isn't you. You might as well do what I say." The fakes look scarily real, McNulty says.

Education is critical to protecting families, McNulty says.

4. Safeguard that tech

There's no universal playbook for keeping kids safe online.

- So Axios asked Kristin Lewis — chief product officer of Aura, an online safety platform for families, and mom of two boys under 10 — for her advice.

Her top tips:

⏰ Track actual screen time. Don't guess. It's incredibly easy for hours of screen time to slip past.

💬 Talk about it. Use these conversations to lay out the basics: screen limits, expectations and the real risks kids face online.

📝 Create a safety contract. Think about:

- How much time should be spent on social media?

- Whom do we talk to online?

- What are the rules about downloading new apps?

The bottom line: Rules must be clear, Lewis notes.

Help Support Local Journalism

We believe in empowering our community through reliable, local journalism.

Become a member today. Contributions start at $25 a year and support our efforts to keep you in the know about what's happening around your city.

Together, we can ensure our community stays informed.

5. One last hopeful thing

"I see that often companies are portrayed as bad guys that don't care and I don't think that's the full story. I see many companies that are super motivated, that are also parents …"

— Mathilde Cerioli, chief scientist at everyone.AI, tells Axios.

Thanks to editor Shane Savitsky.
