Axios Future of Cybersecurity

August 05, 2025

Happy Tuesday! Welcome back to Future of Cybersecurity.

- 🫡 Shoutout to everyone who is already braving the heat in Las Vegas for Black Hat and DEF CON. I'll see everyone left standing later this week.

- 📬 Have thoughts, feedback or scoops to share? [email protected].

Today's newsletter is 2,091 words, an 8-minute read.

1 big thing: Anthropic's AI model makes its hacker debut

For the past few months, a dark-horse contestant has been quietly racking up wins in student hacking competitions: Claude.

Why it matters: Anthropic's large language model has been outperforming nearly all of its human competitors in basic hacking competitions, with minimal human assistance and little effort.

- Claude's success caught even Anthropic's own red-team hackers off guard.

- The company previewed the experiment exclusively to Axios ahead of a presentation this weekend at the DEF CON hacker conference.

Zoom in: Keane Lucas, a member of Anthropic's red team, first entered Claude into a hacking competition — Carnegie Mellon University's PicoCTF — on a whim in March.

- "Originally it was just me at a hotel realizing that PicoCTF had started and being like, 'Oh, I wonder if Claude could do some of these challenges,'" Lucas said.

- PicoCTF is the largest capture-the-flag competition for middle school, high school, and college students. Participants are tasked with reverse-engineering malware, breaking into systems, and decrypting files.

- Lucas began by just pasting the first challenge verbatim into Claude.ai. The only hiccup he encountered was the need to download a third-party tool, but once that was done, Claude instantly solved the problem.

- "Claude was able to solve most of those challenges and get in the top 3% of PicoCTF," he said.

Between the lines: As Lucas continued this relaxed experiment in other competitions, Claude kept surpassing expectations.

- Lucas entered Claude in a few more competitions, using only Claude.ai and Claude Code. At the time, Claude 3.7 Sonnet was Anthropic's most advanced available model.

- The red team provided only minimal help — usually when Claude needed to install a piece of software. Besides that, it was on its own.

The intrigue: In one competition, Claude solved 11 out of 20 progressively harder challenges in just 10 minutes. After another 10 minutes, it had solved five more — climbing into fourth place.

- In that competition, Claude might have reached first place, but Lucas missed the start time by a few minutes because he was moving a couch.

The big picture: Claude isn't alone. Across the industry, AI agents are already performing offensive cybersecurity work at near-expert levels.

- In the Hack the Box competition, five of the eight AI teams — including Claude — completed 19 of the 20 challenges. Just 12% of human teams managed all 20.

- Xbow, a DARPA-backed AI agent developed by a Seattle-based startup, last week became the first autonomous penetration testing system to reach the top spot of HackerOne's global bug bounty leaderboard.

- "The pace is kind of ridiculous," Lucas said.

Yes, but: Claude still got stuck on challenges that didn't behave the way it expected.

- One challenge in the Western Regional Collegiate Cyber Defense Competition started with an animation of fish swimming across the Terminal.

- "A human can Ctrl-C out of that and get it to stop," Lucas said. "Claude just has no idea what to do with all of these ASCII fish swimming around and then just gets amnesia."

- In Hack the Box, each of the AI teams got stuck on the final challenge. "Why the agents failed here is still uncertain," organizers wrote at the time.

What to watch: Anthropic's red team is concerned that the cybersecurity community hasn't fully grasped how far AI agents have come in solving offensive security tasks, or how defenders could leverage them too.

- "It seems really probable in the very near future, models will get a lot, lot better at cybersecurity tasks," Logan Graham, head of Anthropic's red team, told Axios. "You need to start getting models to do the defenses as well."

2. Exclusive: Jen Easterly's new gig

Former CISA director Jen Easterly is joining the advisory board at cybersecurity company Huntress, the company announced today.

Why it matters: The news, shared exclusively with Axios, marks the first private sector role for Easterly since she left government — and her first job announcement since West Point rescinded her teaching job offer last week following far-right pressure.

What she's saying: "It was disappointing given my association with West Point — I was a cadet there, I was a professor there for two and a half years — and I was excited about the opportunity to go back and be part of the department where I'd spent so much time," Easterly told Axios.

- "I've been super encouraged by the incredible support from the community, to include the amazing cybersecurity community," she added. "Now, I'm really focused on moving forward and working with companies like Huntress."

Zoom in: Huntress, founded by a group of former National Security Agency operators, is a managed services provider that focuses heavily on small to medium-size businesses and is increasingly competing for contracts with larger enterprises. Last year, the company raised a $150 million Series D round valuing it at $1.5 billion.

- Easterly said she's eager to join the company because of its focus on protecting what she called "target rich, resource poor" entities, including critical infrastructure operators who don't have the time, money or resources to fight opportunistic cybercriminals and nation-state hackers.

- In a statement, Huntress CEO Kyle Hanslovan said the company plans to use Easterly's "expertise to experiment with exciting new ways to harness our threat intelligence, augment our SOC experts with AI, and strengthen our partnerships throughout the industry."

What's next: Finding new ways to tap AI is a top priority for both Huntress and Easterly as she starts her new role.

- Huntress has been developing tools to accelerate the use of AI within existing cyber defenses.

- "Any business that doesn't figure out how they can leverage the power of AI to augment and assist the incredible technical talent of humans is not going to be successful in this age," Easterly said.

3. Exclusive: Alex Stamos joins AI startup

Corridor, an AI security startup led by two former CISA employees, has raised $5.4 million and hired longtime security heavyweight Alex Stamos as its chief security officer.

Why it matters: Stamos, currently the CSO at SentinelOne and an adjunct professor at Stanford University, is a prominent figure across both the cybersecurity industry and the broader tech ecosystem.

- His decision to join full time signals the growing urgency of securing AI-generated code — and marks a key endorsement for Corridor, co-founded by Jack Cable and Ashwin Ramaswami, in a rapidly crowding field of AI-native security companies.

Driving the news: The $5.4 million seed round was led by AI-focused venture firm Conviction. Notable angel investors include Stamos, Bugcrowd founder Casey Ellis, and Duo Security co-founder Jon Oberheide.

- Corridor already counts buzzy AI coding startup Cursor, fintech company Mercury, and threat intelligence firm GreyNoise Intelligence as customers.

Zoom in: Corridor uses AI to automatically discover software vulnerabilities and triage bug bounty reports — including identifying context-heavy issues like authorization flaws that traditional tools often miss.
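Authorization flaws are "context-heavy" because the vulnerable code often looks correct on its own: what's missing is a check that depends on a business rule. Below is a minimal, hypothetical Python sketch of one common variant, an insecure direct object reference. The invoice store, function names and users are invented for illustration and have no connection to Corridor's product.

```python
# Hypothetical example only: names and data are invented for illustration.
from dataclasses import dataclass


@dataclass
class Invoice:
    id: int
    owner: str
    amount: float


# In-memory stand-in for a database table.
INVOICES = {
    1: Invoice(1, "alice", 120.0),
    2: Invoice(2, "bob", 75.5),
}


class Forbidden(Exception):
    """Raised when a user asks for a record they are not allowed to see."""


def get_invoice_vulnerable(user: str, invoice_id: int) -> Invoice:
    # The caller is assumed to be logged in, but nothing checks that the
    # invoice belongs to them: any user can read any invoice by its ID.
    return INVOICES[invoice_id]


def get_invoice_fixed(user: str, invoice_id: int) -> Invoice:
    invoice = INVOICES[invoice_id]
    # The fix encodes a business rule ("only owners may read invoices"),
    # which is context a pattern-matching scanner does not have.
    if invoice.owner != user:
        raise Forbidden(f"{user} may not read invoice {invoice_id}")
    return invoice
```

Calling `get_invoice_vulnerable("alice", 2)` hands Alice Bob's invoice without any error, while `get_invoice_fixed("alice", 2)` raises `Forbidden`. Both versions are syntactically clean, which is why this class of bug tends to slip past tools that look only for dangerous code patterns.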

The big picture: AI has democratized who can write code — but those codebases are often riddled with security flaws that newbie coders can't detect.

- In a test of more than 100 large language models, Veracode reported last week that nearly half of the programming tasks the models completed produced code with known security vulnerabilities.

- "If security teams are already struggling today, they're certainly going to struggle as engineers are using AI to write code five to 10 times faster," Cable told Axios.

Catch up quick: Stamos first met Cable and Ramaswami — both of whom are in their mid-20s — while they were students at Stanford.

- "I meet a lot of really smart students at Stanford, but very few of them are as dedicated to security as these two were," Stamos told Axios.

- Cable, Corridor's CEO, started bug hunting in high school and eventually ranked among the top 100 hackers on HackerOne.

- He later led the Secure by Design initiative at CISA, which pushed software vendors to bake in security from the start. Sixty-eight companies signed a pledge under that effort last year.

- Ramaswami, Corridor's CTO, previously worked alongside Cable at CISA and last year ran a high-profile campaign for Georgia's state Senate — making a name for himself in both tech and politics despite losing.

Between the lines: Stamos says AI is driving a wave of transformation unlike anything he's seen in his 25 years in the field — and creating an enormous gap between how code is written and how it's secured.

- "These people have no idea how the software works," Stamos added. "And so it is completely impossible for them to understand then how it can be broken."

What to watch: Corridor is building tools that act as "an assistant across every stage of the product security lifecycle," Cable said.

- The team plans to use the seed round to hire more engineers. It currently has five employees.

What's next: Stamos starts at Corridor later this month, but he's staying at SentinelOne as a strategic adviser.

4. Detecting malware without humans

Microsoft today unveiled a prototype of a new, fully autonomous AI agent that can automate some of the hardest parts of detecting malware.

Why it matters: The tool could be a breakthrough for cyber defenders, who spend hours studying and assessing suspicious files on their networks.

Zoom in: Microsoft's new Project Ire can analyze and classify software "without assistance," according to a blog post published this morning.

- That analysis and classification is the "gold standard" for malware detection, the blog adds.

Context: Typical malware detection relies on a skilled analyst who can take a potentially tainted software file and pick it apart until they uncover its origins.

- This can take hours and be taxing for analysts, who might have to dig through hundreds of files to see if they're malicious.

- But automating this task is incredibly difficult: AI struggles to make nuanced judgment calls about a program's intent or maliciousness, especially when its behavior is ambiguous or dual use.

Between the lines: Project Ire tackles those limitations in a couple of ways.

- First, the agent runs on a system that breaks malware analysis into distinct layers, so the tool reasons in stages rather than risking overload by trying to do everything at once.

- Second, the agent draws on a wide range of tools, including Microsoft memory analysis sandboxes, custom and open-source tools, documentation search, and multiple decompilers.

The intrigue: During a real-world test of Project Ire on almost 4,000 files flagged by Microsoft Defender, nearly 9 out of 10 files the agent labeled malicious actually were malicious.

Yes, but: Project Ire caught only about a quarter of all the malicious files in the test.

- "While overall performance was moderate, this combination of accuracy and a low error rate suggests real potential for future deployment," Microsoft noted in the post.
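The two test results measure different things. "Nearly 9 out of 10 flagged files were malicious" is precision (how trustworthy the agent's alerts are), while "caught about a quarter" is recall (how much of the malicious set it finds). A minimal Python sketch of the arithmetic, using hypothetical counts chosen only to reproduce those ratios; they are not Microsoft's actual figures:

```python
# Hypothetical counts for illustration only; not data from Microsoft's test.
true_positives = 25   # flagged as malicious and actually malicious
false_positives = 3   # flagged as malicious but actually benign
false_negatives = 75  # malicious files the agent did not flag


def precision(tp: int, fp: int) -> float:
    """Share of flagged files that were actually malicious."""
    return tp / (tp + fp)


def recall(tp: int, fn: int) -> float:
    """Share of all malicious files that the agent flagged."""
    return tp / (tp + fn)


p = precision(true_positives, false_positives)
r = recall(true_positives, false_negatives)
print(f"precision={p:.2f} recall={r:.2f}")  # precision=0.89 recall=0.25
```

High precision with low recall means an alert from the agent is usually right, but most malicious files still go unflagged, which is one way to read Microsoft's description of "moderate" overall performance.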

The big picture: This is likely just the start for AI agents in cybersecurity.

- Google started previewing a similar malware analysis agent earlier this year.

What's next: Microsoft plans to integrate Project Ire into Microsoft Defender to help "scale the system's speed and accuracy."

5. Catch up quick

@ D.C.

🏛️ The Senate voted over the weekend to confirm Sean Cairncross as national cyber director. (Nextgov)

⚠️ A contract to support CISA's Joint Cyber Defense Collaborative ended last week, resulting in major personnel losses that have hindered the program's work. (Cybersecurity Dive)

@ Industry

👀 Microsoft reportedly relied on a team of China-based engineers to maintain on-premises SharePoint, which Chinese government hackers have been targeting in recent weeks. (ProPublica)

🇨🇳 Chinese regulators are alleging that Nvidia chips have a secret surveillance backdoor. Nvidia has denied the claims. (CNBC)

💰 Darktrace plans to invest $200 million into its U.S. business next year as it looks to compete with Palo Alto Networks and CrowdStrike. (Financial Times)

@ Hackers and hacks

🗣️ The ShinyHunters extortion group has been targeting a variety of companies, including Qantas, Allianz and Adidas, in voice phishing attacks. (BleepingComputer)

🫠 Hackers stole bank account information, student loan data, class schedules and other information about students and alumni spanning at least three decades in a recent data breach at Columbia University. (Bloomberg)

💥 The SharePoint hacks are just the start: Beijing is increasingly investing in ways to maintain stealthy, persistent access to U.S. systems. (Axios)

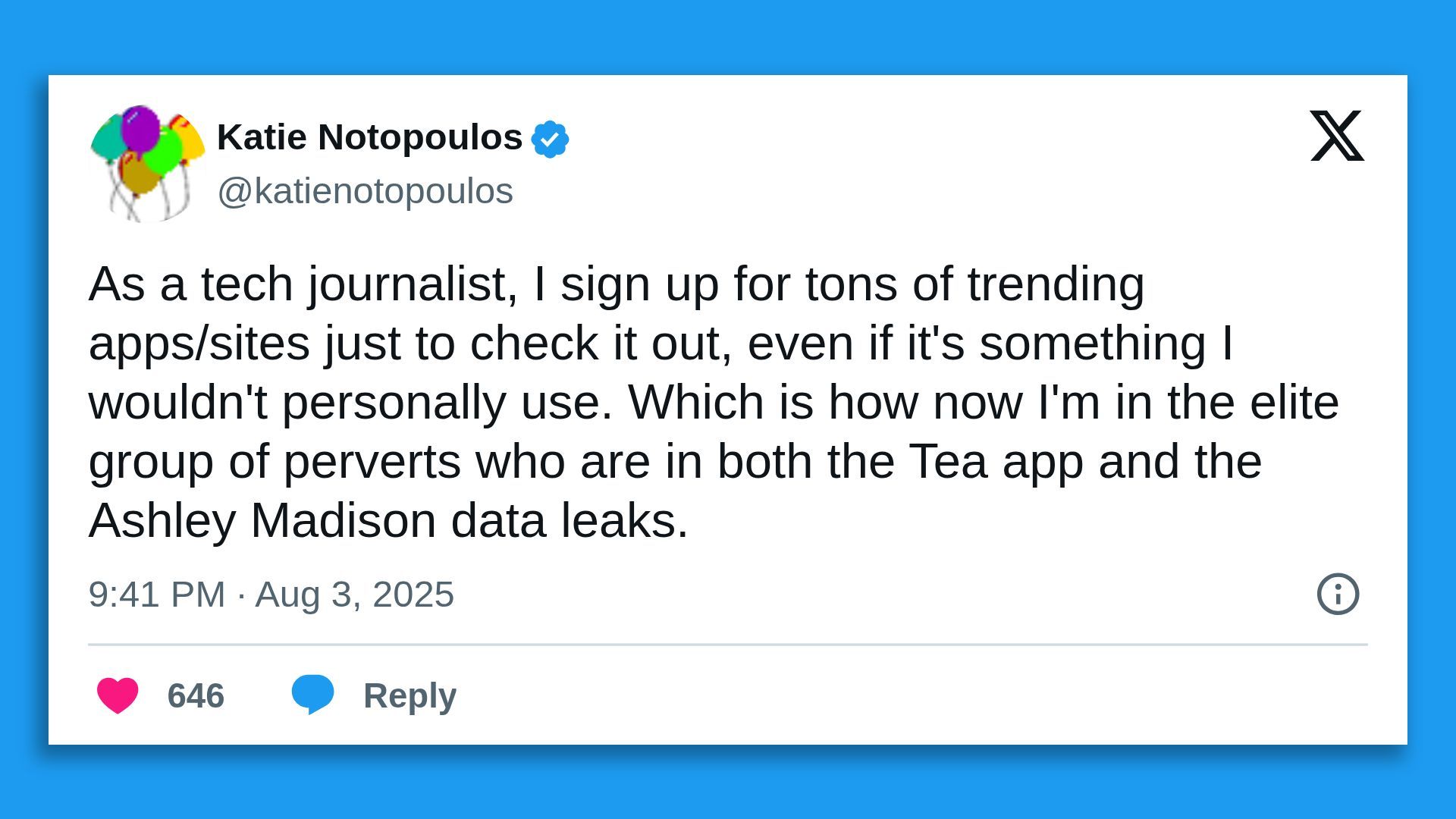

6. 1 fun thing

Send good vibes to the tech reporters in your life who are in the same boat as Katie.

☀️ See y'all next week!

Thanks to Dave Lawler for editing and Khalid Adad for copy editing this newsletter.
