Axios Future

October 05, 2019

Welcome back to Future. Let me know what you think about this issue, and what I should write about in the coming weeks. Just hit reply or send me a note at [email protected]. Erica, who writes Future on Wednesdays, is at [email protected].

Here we go! Today's letter is 1,714 words, which should take about 6 minutes to read.

1 big thing: Medtech's quick-fix addiction

Illustration: Eniola Odetunde/Axios

Some technologists look at the pileup of crises weighing down American health care — overworked doctors, overpriced treatments, wacky health record systems — and see an opportunity to overhaul the industry, which could save lives and make them money.

Yes, but: There's frequently a chasm between can-do engineers itching to rethink health care and the deliberate doctors and nurses leery of tech that can make their lives more complicated, or worse, harm their patients, Axios health care business reporter Bob Herman and I report.

What's happening: Last year, investors handed more than $8 billion to health care tech startups. But the other end of the pipe is mostly dry: relatively few products have been integrated deeply into the labyrinthine medical system. Those that have been often focus on sideshows like wellness rather than core issues like caring for the chronically ill.

- "People are doing things because they can," rather than to address a need, says Ethan Weiss, a cardiologist at UC San Francisco who advises startups.

- Even thoughtful, relevant solutions run into a money mess — persuading a critical mass of hospitals or insurance plans to shell out for every shiny new tech isn't easy.

- "There's not a way while you're still a small company to get enough income flow to survive," says Paul Yock, director of the Stanford Center for Biodesign.

High-profile flameouts show what happens when medicine and technology are totally out of sync.

- IBM's Watson AI system was meant to upend cancer care at MD Anderson. It failed, at times offering "unsafe and incorrect" recommendations, and the partnership was put on ice.

- An AI startup spun out of Memorial Sloan Kettering Cancer Center is dogged by accusations that it quietly profited off patient data.

- Driver, a startup that aimed to connect cancer patients with clinical trials and treatments, quickly shuttered last year because its expensive services didn't fit in the system.

- Theranos and uBiome are their own unique disasters.

At the root of some failures is the way developers approach health care. Some take it on like any other technical puzzle, when it can be orders of magnitude more complex.

- Many startups are made up of "a bunch of brilliant people, very arrogant, who think they're going to call a nurse for a few hours" or swing by a clinic and then build a product, says Ann Farrell, a longtime health care IT consultant. The result: solutions that don't fit the problem.

- More broadly, the economics of U.S. health care are hopelessly tangled and wasteful — a political problem that won’t succumb to a technical fix.

Why it matters: "If your website comes down, well, OK, you figure it out," says David Shaywitz, a Silicon Valley investor with a medical degree. "If you're promising, 'This is how we're going to figure out the dose of insulin you need,' and you're off, you can kill somebody."

The other side: Some firms have made inroads without alienating clinicians.

- One Medical, which operates primary care clinics through memberships and as an employer benefit, is expanding and has built its own electronic health record.

- Large health insurers have adopted and invested in Omada Health, which provides online counseling for people who have chronic diseases.

- "Love it or hate it, there are some immutable laws of physics in the U.S. health care system. There are some things that you just need to figure out how to fit inside," says Sean Duffy, Omada's co-founder, who spent time in medical school.

The bottom line: "Most of these health care startups are gonna go belly up because the health care delivery system is not all that easily disrupted," says Bob Wachter, head of the UCSF Department of Medicine.

- The firms that resonate will likely be those whose products are designed by, or shaped by direct feedback from, the doctors, nurses and technicians who will use the technology but were trained to think of patients first.

- "You have to be in the clinical environment," says Raj Ratwani, a health IT expert at MedStar Health.

2. Wrestling over the black box

Illustration: Sarah Grillo/Axios

We're seeing the beginnings of a tug-of-war at the highest levels of government over how much access people should have to AI systems that make critical decisions about them.

What's happening: Life-changing determinations, like the length of a criminal's sentence or the terms of a loan, are increasingly informed by AI programs. These can churn through oodles of data to detect patterns invisible to the human eye, potentially making more accurate predictions than before.

Why it matters: The systems are so complex that it can be hard to know how they arrive at answers — and so valuable that their creators often try to restrict access to their inner workings, making it potentially impossible to challenge their consequential results.

Driving the news: Two recent proposals are pulling in opposite directions.

- A bill from Rep. Mark Takano, a California Democrat, would block companies that design AI systems for criminal justice from withholding details about their algorithms by claiming they’re trade secrets.

- A proposal from the Department of Housing and Urban Development (HUD) would protect landlords, lenders and insurers that want to use algorithms for important determinations, shielding them from claims that the algorithms unintentionally have a more negative impact on certain groups of people.

These are among the earliest attempts to set down rules and definitions for algorithmic transparency. How they shake out could set rough precedents for how the government approaches the many questions still to come.

Proponents of more access say it's vital to test whether walled-off systems are making serious mistakes or unfair determinations — and argue that the potential for harm should outweigh companies' interest in protecting their secrets.

- Developers regularly invoke trade-secret rights to keep their algorithms — used for key evidence like DNA matches or bullet traces — away from the accused, says Rebecca Wexler, a UC Berkeley law professor who consulted on Takano's bill.

- "We need to give defendants the rights to get the source code and [not] allow intellectual property rights to be able to trump due process rights," Takano tells Axios. His bill also asks the government to set standards for forensic algorithms and test every program before it is used.

The HUD proposal would require someone to show that an algorithmic decision was based on an illegal proxy, like race or gender, in order to succeed in a lawsuit. But critics say that can be impossible to determine without understanding the system.

- "By creating a safe harbor around algorithms that do not use protected class variables or close proxies, the rule would set a precedent that both permits the proliferation of biased algorithms and hampers efforts to correct for algorithmic bias," says Alice Xiang, a researcher at the Partnership on AI.

- HUD is soliciting comments on the proposal until later this month.

The other side: "The goal here is to bring more certainty into this area of the law," said HUD General Counsel Paul Compton in an August press conference. He said the proposal "frees up parties to innovate, take risks and meet the needs of their customers without the fear that their efforts will be second-guessed through statistics years down the line."

3. The two-faced freelance economy

Illustration: Sarah Grillo/Axios

Some freelancers can pull in more than $100 an hour for management consulting, programming or graphic design. Others struggle to make much more than $10 an hour, beholden to "gig work" platforms like Uber or TaskRabbit.

Why it matters: Being one's own boss, with the flexibility it brings, can be lucrative for people who can differentiate themselves from competitors. For the rest, it can be quicksand.

The big picture: Freelance work makes up nearly 5% of U.S. GDP, according to a new study commissioned by Upwork, a site for high-earning freelancers to find jobs. And more people than ever (28.5 million, or half the freelance workforce) say it's a long-term plan.

- Freelancers who are "significantly better than average" at their jobs tend to do well, says Stephane Kasriel, Upwork's CEO. "Stronger pros can really dictate their terms."

- Gig work apps capitalized on this dream to attract millions to their platforms: Work whenever you want to make some spending money on the side, they promised.

But for those without a rare or standout skill, reality hasn't quite panned out that way.

- "People turn to this work, but it's not as lucrative as they think it's going to be," says Alexandrea Ravenelle, a UNC professor who interviewed dozens of workers for her recent book, "Hustle and Gig."

- "I'm finding a considerable number of workers end up turning to gig work, they think, for the short term — and they're still doing it 4 years later," Ravenelle says. There's no time to network or send out resumes when you're spending every working moment hunting for the next job.

The bottom line: "Given that being in the traditional workforce typically comes with benefits and protections, I think most workers would be better off being there rather than having to constantly hustle for the next gig," says Ravenelle.

4. Worthy of your time

Illustration: Aïda Amer/Axios

All eyes on U.S.–China trade talks (Dion Rabouin - Axios)

Google's hunt for "darker skin tones" takes questionable turn (Ginger Adams Otis & Nancy Dillon - NY Daily News)

Deportations in the surveillance age (McKenzie Funk - NYT Magazine)

The rise of the financial machines (The Economist)

Attack of the kamikaze drones (Joshua Brustein - Bloomberg)

5. 1 fun thing: AI tries word games

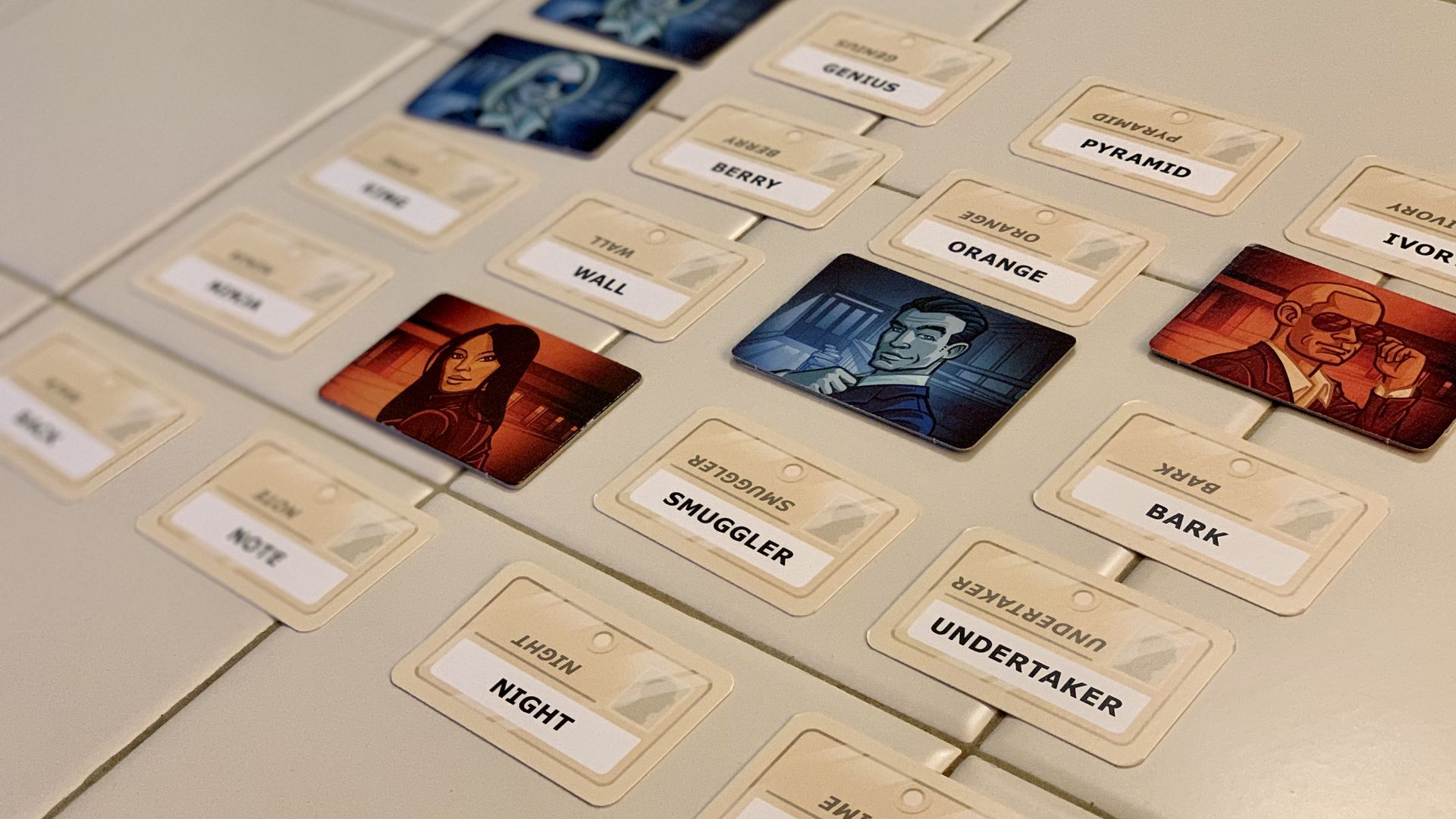

A game of Codenames. Photo: Kaveh Waddell/Axios

It's one thing to play chess against a computer — you'll lose — but it's another entirely to play a collaborative word game. That stretches the limits of today's AI.

What's happening: Game geeks are trying to create bots that can play Codenames, the super-popular word guessing game.

- It's played with two teams and a board with 25 words, like in the photo above.

- Each turn, one person tries to come up with a single-word clue linking as many of the 25 words as possible; then, that person's teammates try to guess as many words as they can.

Giving a good clue is pretty easy for computers, using basic open-source machine learning tools for language understanding.

- "I was surprised at how well it worked," said David Kirkby, an astrophysicist at UC Irvine who programmed a Codenames bot for fun. "It came up with clues which weren't obvious to me — but they made sense."

- Kirkby's bot once gave the clue "Wrestlemania" to connect the words "undertaker" and "match."

- Another bot coded by Jeremy Neiman, an engineer at Alphabet's Sidewalk Labs, used "telemedicine" to connect "ambulance," "hospital," "link" and "web."
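
For the curious, here is a minimal sketch of how a clue-giving bot might work. It assumes the bot represents each word as a vector (a "word embedding") and picks the clue that sits closest to all of its target words while staying far from words to avoid. The piece doesn't say which tools Kirkby's or Neiman's bots use, so treat this as one plausible approach, not their method. The vectors in `TOY_VECTORS` are invented by hand for illustration, and `cosine` and `best_clue` are hypothetical helper names; a real bot would load pretrained vectors from an open-source library.

```python
import math

# Hypothetical toy embeddings: hand-made 3-d vectors standing in for real
# pretrained word vectors. Every number here is invented for illustration.
TOY_VECTORS = {
    "ambulance": (0.9, 0.1, 0.0),
    "hospital": (0.8, 0.2, 0.1),
    "web": (0.1, 0.9, 0.0),
    "link": (0.2, 0.8, 0.1),
    "piano": (0.0, 0.1, 0.9),
    "telemedicine": (0.6, 0.6, 0.0),
    "doctor": (0.9, 0.0, 0.1),
    "internet": (0.0, 0.9, 0.1),
    "music": (0.0, 0.0, 1.0),
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_clue(targets, avoid, candidates, vectors=TOY_VECTORS):
    """Pick the candidate clue that links all targets and steers clear of avoid words.

    Score = similarity to the weakest-linked target
            minus similarity to the closest word to avoid.
    """
    def score(clue):
        clue_vec = vectors[clue]
        weakest_target = min(cosine(clue_vec, vectors[t]) for t in targets)
        strongest_avoid = max(
            (cosine(clue_vec, vectors[w]) for w in avoid), default=0.0
        )
        return weakest_target - strongest_avoid

    return max(candidates, key=score)


# Usage: look for one clue tying together four words on the board.
print(best_clue(
    targets=["ambulance", "hospital", "link", "web"],
    avoid=["piano"],
    candidates=["telemedicine", "doctor", "internet", "music"],
))
```

With these invented vectors, the sketch lands on "telemedicine." Scoring by the weakest-linked target is a design choice: it favors a clue that covers every target at least somewhat over one that nails two words and misses the rest.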

What's really hard is guessing whether your teammates will understand your clue. This is something humans are great at — if you're playing with a sibling or friend, you can draw on shared experiences to come up with the perfect word.

- The next big step is to create bots that develop an understanding of their teammates over the course of several games, says Adam Summerville, a professor at Cal Poly Pomona who hosts Codenames AI competitions.

- Achieving this goal is key to making robots that communicate better with people to accomplish a shared task.
