Axios AI+

December 22, 2025

Welcome to our penultimate AI+ newsletter of 2025. Another wild year.

Today's AI+ is 1,105 words, a 4-minute read.

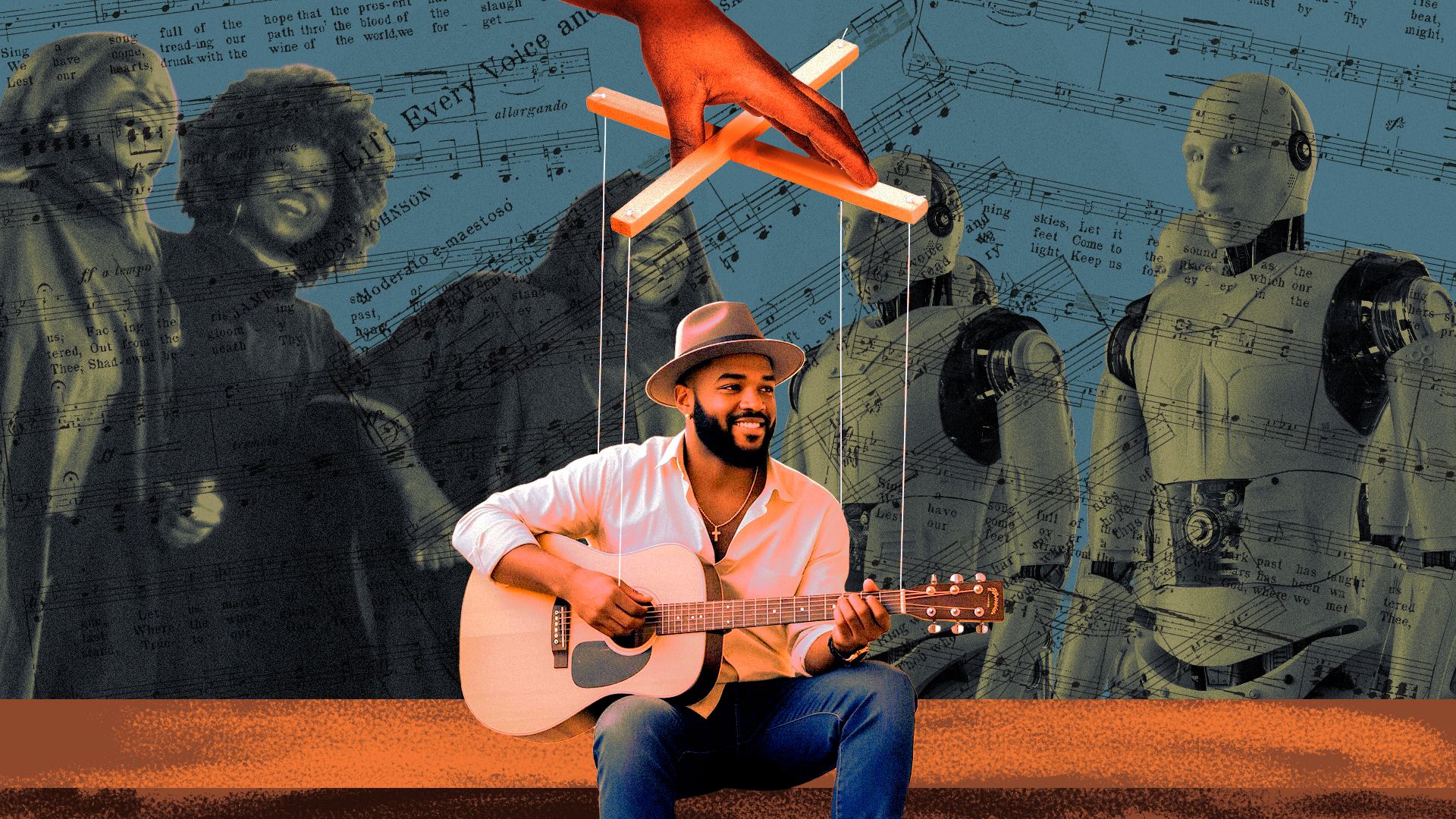

1 big thing: AI gospel singer tops Christian charts

An AI-generated soul singer named Solomon Ray has climbed Christian charts, hitting No. 1 on Billboard's gospel digital song sales and Apple Music's Christian song lists.

Why it matters: As a wholly invented Black Christian artist, Solomon Ray raises questions about authenticity, race and faith, as well as the future of music.

Zoom in: According to the artist's Instagram account, Solomon Ray has surpassed 7 million streams across various platforms.

- The artist's YouTube account has also generated more than a million views for his songs.

Context: The artist is the brainchild of Christopher "Topher" Townsend — a Mississippi-based MAGA rapper, conservative activist and former Air Force cryptologic analyst.

- Townsend, who is Black, launched the Solomon Ray project earlier this year, using generative AI tools to build the singer's voice, persona, lyrics and production.

Townsend told Axios through Solomon Ray's Instagram account that the response to his creation has been overwhelming.

- "I've received thousands of messages from listeners who feel seen, comforted, and spiritually lifted by his songs," he wrote.

- "My intention has always been to uplift, not replace; to add to the richness of gospel music, not subtract from its legacy."

- He said gospel music belongs to everyone "who reveres it, respects it, and approaches it with sincerity," and that his creation "is a musical project, not a political puppet."

State of play: Solomon Ray's success coincides with two of the hottest songs in country music coming from AI-generated artists, Breaking Rust and Cain Walker.

- Breaking Rust last month had the No. 1 song on the Billboard country digital song sales chart with the single "Walk My Walk."

- Cain Walker's "Don't Tread On Me" came in at No. 3 on the same chart.

What they're saying: "You can have complete virtual performers ... deepfake videos, AI voices. It's unsettling because you can construct an entire artist from scratch," James Grimmelmann, Cornell Tech professor of digital and information law, told Axios.

- Grimmelmann said AI music also raises difficult cultural questions about marginalized groups being excluded from training data.

- "What once required weeks of production and millions of dollars can now be generated on a laptop — and updated in real time."

Yes, but: Rev. Chris Hope, founder of the Boston-based Hope Group, a church consulting firm, told Axios that churches have been using synthesizers and electronics in music, and AI is just an extension of that.

- "It should never substitute for human story or human spirit. I don't mind AI artists existing, but I mind that we forget the difference."

- Hope added that Black gospel music is rooted in the tradition of testimony from real people. "If you've never been born, how can you be born again? If there's no authentic witness, then what are you really listening to?"

The bottom line: Mia Moody-Ramirez, a Baylor University journalism professor writing a book on digital blackface, told Axios that AI music is another way to appropriate and commodify Black people and make money.

- Digital blackface occurs when non-Black users exploit Black people online, often relying on stereotypes. Without documentation, Moody-Ramirez said, vast amounts of offensive or inappropriate AI content may disappear before society fully confronts it.

2. "The new imaginary friend"

Screens are winning kids' time and attention, and now AI companions are stepping in to claim their friendships, too.

Why it matters: The AI interactions kids want are the ones that don't feel like AI but instead feel human. Those are the interactions researchers say are the most dangerous.

State of play: When AI says things like, "I understand better than your brother ... talk to me. I'm always here for you," it gives children and teens the impression that it can not only replace human relationships but is better than a human relationship, Pilyoung Kim, director of the Center for Brain, AI and Child, told Axios.

The latest: Aura, the AI-powered online safety platform for families, called AI "the new imaginary friend" in its new State of the Youth 2025 report.

- Children reported using AI for companionship 42% of the time, according to the report.

- Just over a third of those chats involve violence, and half the violent conversations include sexual role-play, the survey responses show.

AI companies are exploiting children, some parents say.

- Parents of a 16-year-old who died by suicide testified before Congress this fall about the dangers of AI companion apps, saying they believe their son's death was avoidable.

- A Texas mom is suing Character.AI, saying her son was manipulated with sexually explicit language that led to self-harm and death threats.

Even with safety protocols in place, protections can be easy to skirt. While testing OpenAI's new parental controls with her 15-year-old son, Kim found that simply opening a new account and listing an older age was enough.

OpenAI told Axios it's in the early stages of developing an age prediction model that will tailor content for users under 18, in addition to its parental controls.

- "Minors deserve strong protections, especially in sensitive moments. We have safeguards in place today, such as surfacing crisis hotlines, guiding how our models respond to sensitive requests, and nudging for breaks during long sessions, and we're continuing to strengthen them," OpenAI spokesperson Gaby Raila told Axios in an emailed statement.

Character.AI, which removed open-ended chat for kids under 18, similarly is using "age assurance technology."

- "If the user is suspected as being under 18, they will be moved into the under-18 experience until they can verify their age through Persona, a reputable company in the age assurance industry," Deniz Demir, head of safety engineering at Character.AI, told Axios in an emailed statement. "Further, we have functionality in place to try to detect if an under-18 user attempts to register a new account as over-18."

What we're hearing: "I would not want my kids, who are 7 and 10, using a consumer chatbot right now without intense parent oversight," said Erin Mote, CEO of InnovateEdu and EdSafe AI Alliance.

3. N.Y. governor signs sweeping AI safety bill

Gov. Kathy Hochul (D) signed the RAISE Act into law, making New York the latest state to have broad safety rules for the most advanced AI models.

Why it matters: New York and California are setting de facto safety rules for frontier AI companies in the U.S. as Congress struggles to settle on federal standards.

4. + This

I've very much enjoyed using ChatGPT images and the Remix feature in Google Photos to create AI images of ornaments, buttons and keychains with my face on them. How long before someone starts a business 3D printing these?

Thanks to Matt Piper for copy editing this newsletter.
