Women's deepfake lawsuit targets AI porn industry

Illustration: Natalie Peeples/Axios

Three women, including a Kansas City native, say in a lawsuit that pornographic videos depicting their likenesses circulated online after AI platforms were used to create sexual content based on their Instagram posts.

The big picture: A new exploitative industry is harnessing AI technology to generate explicit "deepfakes" from PG social media photos, the lawsuit says.

- Attorneys, legal experts and policymakers tell Axios that existing laws don't do enough to stop bad actors from using real people's images to fabricate sexual content.

Catch up quick: In January, the women sued several men and companies in Arizona state court under the state's revenge porn law because Arizona, like most states, has yet to ban the creation of nonconsensual sexual deepfakes altogether.

- The lawsuit says three of the men created a company that helps subscribers create AI porn, make money from it, and avoid legal repercussions.

- The defendants have not yet responded to the lawsuit, and Axios was unable to reach them for comment.

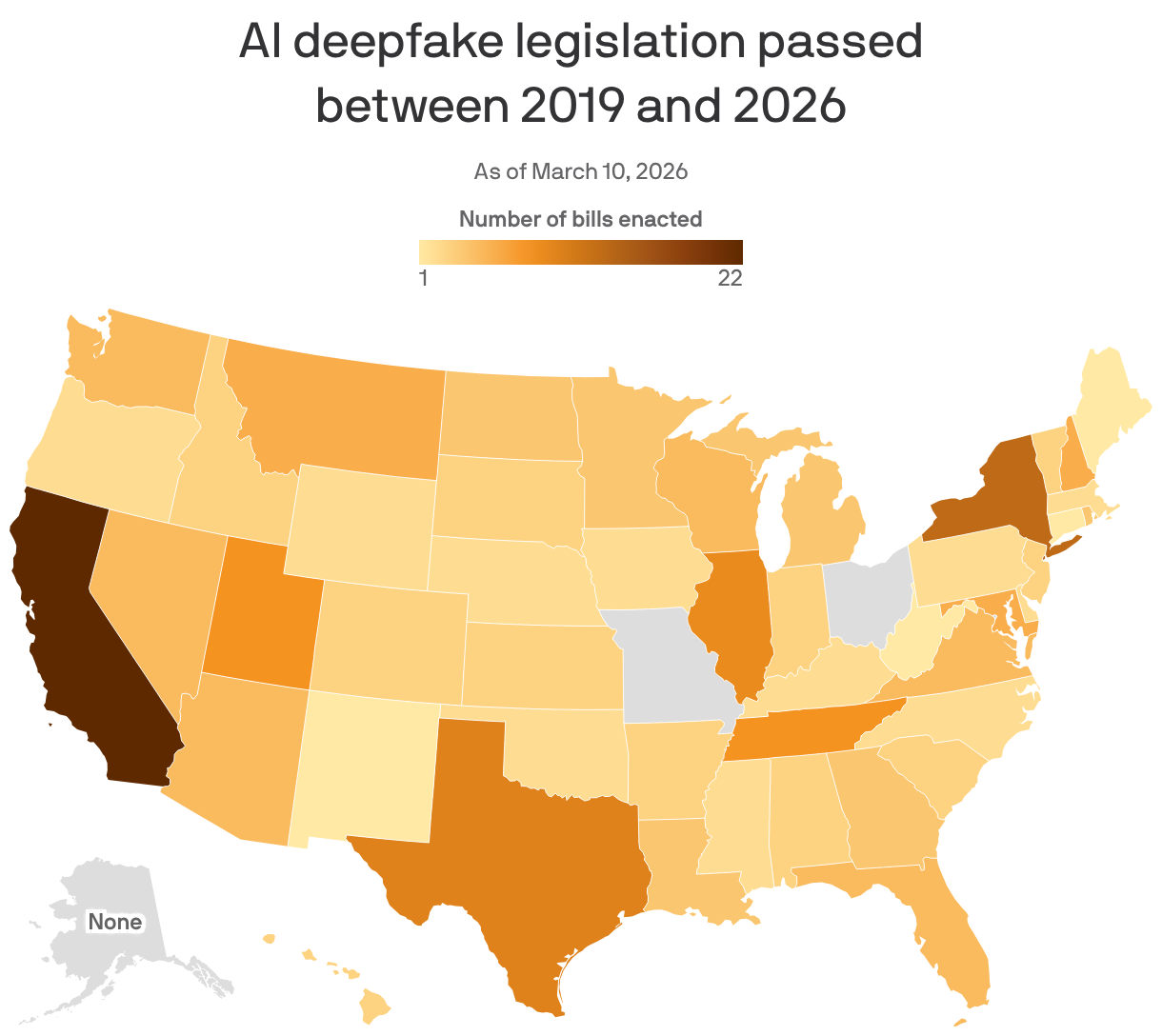

Zoom out: Nearly every state has enacted legislation targeting AI deepfakes in recent years, according to Ballotpedia, but the laws vary widely and many remain narrowly focused on election misinformation or child exploitation.

- Congress has also stepped in. The Take It Down Act, passed last year, makes posting nonconsensual images a federal crime and requires platforms to remove them within 48 hours.

Zoom in: Missouri is one of three states that haven't passed any deepfake laws since generative AI took off, with state lawmakers introducing hundreds of versions in recent years without success.

- Several bills introduced so far this year target nonconsensual deepfakes, including SB 1012, which would make posting or threatening to post deepfakes a felony and put the responsibility on the people controlling the AI.

What they're saying: "Putting guardrails around AI is bipartisan in nature," Sen. Doug Beck (D-Affton), the bill's co-sponsor, tells Axios.

- "My fear is, if we don't do anything, it's going to run over us," he says. "It gets so realistic, it's scary, and they're hurting innocent people."

Reality check: Most women, including the plaintiffs in the Arizona case, are completely unaware they've been targeted until "it's way too damn late" and someone they know has seen the explicit content, their Kansas City-based attorney, Nick Brand, tells Axios.

- One of the companies named in the lawsuit, CreatorCore LLC, claims to create 1,000 "influencers" per week, Brand said.

- Co-counsel Cristina Perez said not even private Instagram accounts are safe, because sometimes it's a social media connection who swipes the photos and uploads them to the platform.

What we're watching: Even if sufficient laws are enacted, internet anonymity makes it exceedingly difficult to find the people who create and distribute illegal material, Sean Harrington, AI & Legal Tech Studio director at Arizona State University's Sandra Day O'Connor College of Law, tells Axios.

- Harrington said attorneys and investigators — and the policymakers who fund their agencies — need to prioritize thwarting and prosecuting cybercrimes.

- He said there's a lack of government understanding of how quickly AI technology is evolving and the ramifications of its misuse.

The bottom line: "[Cyber crimes are] such a big part of our lives and can have devastating consequences. Something like that could truly ruin a young woman's life," Harrington said.